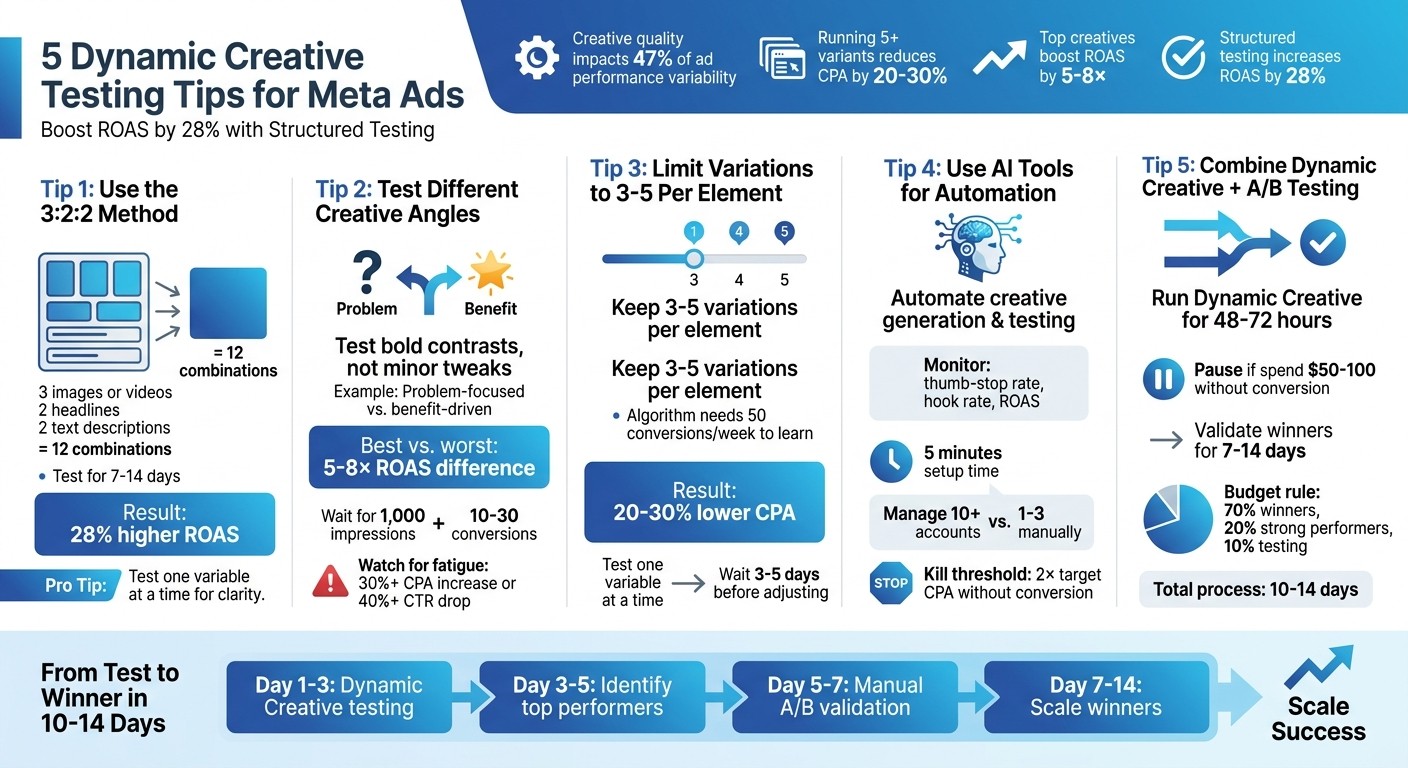

5 Dynamic Creative Testing Tips for Meta Ads

Use the 3:2:2 method, limit variations, test bold angles, and combine AI with A/B to lower CPA and raise ROAS.

Meta’s Dynamic Creative feature makes it easier to test ad combinations like images, headlines, and CTAs to find what works best. Since creative quality impacts 47% of ad performance variability, testing is essential. Running 5 or more variants can reduce CPA by 20–30%, and top-performing creatives can boost ROAS by 5–8×. Here’s how you can lower creative testing costs and optimize your testing process:

Follow the 3:2:2 testing method: Use 3 images or videos, 2 headlines, and 2 text descriptions to create 12 combinations. Test for 7–14 days and use Ad Set Budget Optimization (ABO) for even exposure.

Experiment with Different Angles: Test bold, contrasting concepts instead of minor tweaks, like problem-focused vs. benefit-driven ads.

Limit Variations: Stick to 3–5 options per element to avoid diluting results or spreading your budget too thin.

Leverage AI Tools: Platforms like AdAmigo.ai automate creative generation, testing, and performance tracking, saving time and effort.

Combine Dynamic Creative with A/B Testing: Use Dynamic Creative for quick insights, then validate top performers through manual A/B testing.

1. Use the 3:2:2 Dynamic Method

The 3:2:2 method is a structured approach designed to give Meta's algorithm just the right amount of variety without spreading your budget too thin. Here’s how it works: upload 3 creative assets (like images or videos), 2 headlines, and 2 primary text descriptions into a single Dynamic Creative ad set. Meta's algorithm will then mix and match these elements, creating 12 unique ad combinations. These combinations are shown to different audience segments, helping the system pinpoint which ones perform best. This setup ensures enough variation to test effectively while keeping each asset's performance strong.
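To see where the 12 combinations come from, here is a minimal sketch that enumerates them. The asset labels are hypothetical placeholders, and the code only illustrates the arithmetic; it says nothing about how Meta actually assembles or serves the ads.

```python
from itertools import product

# Hypothetical asset labels; replace with your own creative names.
creatives = ["product_demo", "lifestyle_shot", "customer_testimonial"]
headlines = ["Free Shipping", "30-Day Returns"]
primary_texts = ["primary_text_a", "primary_text_b"]

# Every (creative, headline, primary text) pairing in a 3:2:2 setup.
combinations = list(product(creatives, headlines, primary_texts))

print(len(combinations))  # 3 * 2 * 2 = 12
for creative, headline, text in combinations:
    print(f"{creative} | {headline} | {text}")
```

Adding even one more option to an element multiplies the total, which is why the method keeps each list short.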

If you expand beyond the base 3:2:2 setup, cap each element at 3–5 distinct variations to balance reach against meaningful results. The RedClaw Performance Team emphasizes the importance of a structured testing process, noting that advertisers who follow such a framework see a 28% higher ROAS compared to those who test randomly.

When crafting your creatives, make sure they’re distinct. For example, you could use a product demo, a lifestyle shot, and a customer testimonial. Similarly, vary your headlines and descriptions by testing different value propositions, such as “Free Shipping” versus “30-Day Returns.”

Run your tests for 7–14 days to account for daily fluctuations and give the algorithm enough time to learn. While it’s fine to check early results after 48–72 hours, hold off on making major changes until the 3–5 day mark. If a creative achieves a 3×+ ROAS or hits a CPA below your target, move it into a scaling campaign like Advantage+ Shopping. Meanwhile, continue testing new creative ideas separately.

For better data collection, use Ad Set Budget Optimization (ABO) instead of Campaign Budget Optimization (CBO). Setting the budget at the ad set level gives each test more even exposure early on, improving your chances of higher ROAS. Use this method as your starting point before diving into more creative strategies.

2. Test Different Creative Angles

When it comes to Meta's dynamic creative performance, experimenting with a variety of creative approaches can make a noticeable difference.

Small cosmetic changes won’t move the needle much. Instead, testing completely different creative concepts can deliver a 3–8× boost in ROAS. This highlights just how important your choice of concept is in determining campaign success. The key takeaway? Boldly explore distinct creative ideas rather than settling for minor tweaks.

For example, try testing a problem-focused ad against a benefit-driven one. Think along the lines of: "Tired of wasting money on ads that don't convert?" versus "Here's how to double your ROAS." You could also compare testimonial-based ads with educational how-to content. The RedClaw Performance Team found that the best-performing creative in a test can outperform the weakest by a staggering 5–8× in ROAS.

To avoid common testing mistakes and ensure you’re isolating the creative concept as the variable, keep everything else consistent - audience targeting, budget, call-to-action, and landing page should remain unchanged. Tests should run for 7–14 days, and it’s best to wait until each variant hits at least 1,000 impressions and 10–30 conversions before declaring a winner. Avoid drawing conclusions too early, as the first three days often fall within the campaign’s learning phase.
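The thresholds above can be turned into a simple readiness check. This is a sketch under stated assumptions: `VariantStats` and `can_declare_winner` are hypothetical names, the numbers are typed in by hand rather than pulled from Meta, and the defaults use the low end of the ranges mentioned (7 days, 1,000 impressions, 10 conversions).

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Hand-entered results for one creative variant (hypothetical structure)."""
    name: str
    impressions: int
    conversions: int


def can_declare_winner(variants, days_running, min_days=7,
                       min_impressions=1_000, min_conversions=10):
    """Return True only when the test has run long enough and every
    variant has cleared both the impression and conversion minimums."""
    if days_running < min_days:
        return False
    return all(
        v.impressions >= min_impressions and v.conversions >= min_conversions
        for v in variants
    )


results = [
    VariantStats("problem_focused", impressions=1_500, conversions=12),
    VariantStats("benefit_driven", impressions=2_000, conversions=25),
]
print(can_declare_winner(results, days_running=8))
```

Raise `min_conversions` toward 30 if you want a stricter bar before picking a winner.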

Once you’ve identified a winning creative angle - typically one that generates 30+ conversions while keeping CPA at or below your target - roll it into your main campaigns or Advantage+ Shopping Campaigns. Keep testing fresh ideas to maintain momentum. Watch for signs of creative fatigue, such as a 30%+ increase in CPA or a 40%+ drop in CTR, to know when it’s time to refresh your approach.
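As a rough illustration, the fatigue signals can be checked by comparing current numbers against a baseline period. The function name and the choice of baseline are assumptions made for this sketch; the 30% and 40% limits come from the thresholds above.

```python
def is_fatigued(baseline_cpa, current_cpa, baseline_ctr, current_ctr,
                cpa_rise_limit=0.30, ctr_drop_limit=0.40):
    """Flag creative fatigue when CPA has risen by 30%+ or CTR has
    dropped by 40%+ relative to the baseline period."""
    if baseline_cpa <= 0 or baseline_ctr <= 0:
        raise ValueError("baseline values must be positive")
    cpa_rise = (current_cpa - baseline_cpa) / baseline_cpa
    ctr_drop = (baseline_ctr - current_ctr) / baseline_ctr
    return cpa_rise >= cpa_rise_limit or ctr_drop >= ctr_drop_limit


# CPA climbed from $20 to $27 (a 35% rise), so this creative is flagged.
print(is_fatigued(baseline_cpa=20.0, current_cpa=27.0,
                  baseline_ctr=2.0, current_ctr=1.9))
```

Either signal alone triggers the flag here; requiring both would be a more conservative variant.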

3. Limit Variations to 3-5 Per Element

When it comes to creative testing, keeping variations within a range of 3 to 5 for each element makes a big difference in maintaining clarity and optimizing performance. Testing too many variations can stretch your budget thin and muddy your data. As AdStellar AI explains:

"Three variations give you enough data points to identify patterns. Five variations provide statistical robustness without overwhelming your budget or diluting your audience".

Meta's algorithm supports this approach, as it needs about 50 conversions per ad set per week to exit the learning phase and operate effectively. If you test too many variations, impressions spread too thinly across options, which can hinder performance. Advertisers sticking to the 3-to-5 range often see 20–30% lower CPA compared to those testing just 1 or 2 variations.

A smart way to test is by isolating one variable at a time. For instance, if you're experimenting with headlines, keep the image and CTA consistent across all versions. This way, testing 5 headlines with the same image gives you focused insights. On the other hand, mixing 5 headlines with 5 images creates 25 combinations, making it almost impossible to pinpoint what’s actually working.
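A quick calculation shows how fast the data thins out. The sketch below assumes, purely for illustration, that an ad set's 50 weekly conversions are spread evenly across combinations; real delivery is uneven, so treat the result as a rough average.

```python
from math import prod


def combination_count(options_per_element):
    """Total combinations when every option of every element is mixed."""
    return prod(options_per_element)


def avg_conversions_per_combination(weekly_conversions, options_per_element):
    """Average conversions per combination under an even-spread assumption."""
    return weekly_conversions / combination_count(options_per_element)


isolated = combination_count([5, 1])  # 5 headlines, 1 fixed image
mixed = combination_count([5, 5])     # 5 headlines x 5 images

print(isolated, avg_conversions_per_combination(50, [5, 1]))  # 5 10.0
print(mixed, avg_conversions_per_combination(50, [5, 5]))     # 25 2.0
```

At roughly two conversions per combination, no single pairing has enough data to be judged, which is the dilution problem described above.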

Once you've gathered insights from these controlled tests, automated rules can help you stay efficient. For example, set a rule to pause any ad that spends more than twice your target CPA without converting. Let your tests run for at least 3 to 5 days before making adjustments, as tweaking too early can reset the learning phase and delay meaningful results.
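The pause rule can be expressed as a small local check. This is a sketch, not Meta's automated rules feature or API: `should_pause` is a hypothetical helper, and the 3-day minimum is an assumption taken from the low end of the waiting period mentioned above.

```python
def should_pause(spend, conversions, target_cpa, days_running,
                 cpa_multiple=2.0, min_days=3):
    """Pause an ad that has spent more than 2x target CPA with zero
    conversions, but only after the minimum waiting period has passed."""
    if days_running < min_days:
        return False  # too early; changes could reset the learning phase
    return conversions == 0 and spend > cpa_multiple * target_cpa


# $65 spent, no conversions, $30 target CPA, day 4 of the test.
print(should_pause(spend=65.0, conversions=0, target_cpa=30.0, days_running=4))
```

The same logic can be recreated as a rule in Ads Manager; the code simply makes the condition explicit.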

After identifying a winning variation - one that hits your conversion goals while staying within your CPA target - move it into a scaling campaign. Use a larger budget and a broader audience for this phase. And don’t stop there; as soon as you scale a winning variation, start testing a new element to keep your momentum going.

4. Use AI Tools for Creative Generation and Bulk Testing

Relying solely on manual creative testing is exhausting and time-consuming. AI tools for Facebook and Instagram ads step in to simplify the entire process - handling creative generation, campaign launches, and performance tracking with ease. These platforms streamline everything, from creating brand-aligned ads to running bulk tests and monitoring their success.

Take AdAmigo.ai, for example. It analyzes your top-performing ads and even your competitors' creatives, then produces variations that maintain your brand's style. Forget spending hours tinkering in Ads Manager; with tools like this, you can simply upload assets to Google Drive, provide a quick brief, and let the AI handle the rest. It automates tasks like writing ad copy, structuring ad sets, and publishing directly to your Meta account. What used to take hours can now be done in minutes. Plus, you can test multiple variations at once without overwhelming your team, making it a perfect complement to structured testing methods.

The impact on performance is hard to ignore. AI tools monitor key metrics such as thumb-stop rate, hook rate, and ROAS, helping you identify winning creatives early - before draining your budget. They even tag creative elements like hooks, colors, and characters, giving you insight into which factors are driving results.

Setting up these tools is quick - just five minutes. Connect your Meta account, set your KPIs and budget limits, and choose whether you want manual approvals or full AI autonomy. From there, the AI takes over, continuously monitoring campaigns, reallocating budgets, pausing underperforming ads, and scaling successful ones far faster than manual management ever could. With these tools, a single media buyer can manage over 10 accounts simultaneously, compared to just 1–3 accounts using traditional methods.

To safeguard your budget, establish clear kill thresholds and guardrails - for instance, pausing any ad that spends twice your target CPA without converting. As the AI gathers more data, its decision-making sharpens, creating a snowball effect where performance improves steadily over time.

5. Combine Dynamic Creative with Manual A/B Oversight

Dynamic Creative can quickly test multiple ad combinations to find what works, but pairing it with manual A/B testing helps confirm which elements truly drive performance. A hybrid approach lets you take advantage of Dynamic Creative’s speed while ensuring your results are backed by solid data. Here’s how you can blend both methods to refine your ad strategy.

Start by running Dynamic Creative for 48 to 72 hours, using 3 to 5 variations per element. Monitor performance closely - pause any combination that spends $50–$100 without a single conversion or goes beyond twice your target CPA. Once you spot a strong performer, move it into a manual A/B test. Here, you’ll isolate one variable at a time. For instance, keep the image and call-to-action the same but test two different headlines. Run this test for 7 to 14 days to account for Meta’s learning phase and daily fluctuations. Make sure you reach at least 1,000 impressions and 10–30 conversions per variant before declaring a winner.

"Advertisers who follow a structured creative testing process achieve 28% higher ROAS than those who test randomly, because they identify and scale winners faster while killing losers earlier." - RedClaw Performance Team

Once you’ve validated your top performers, move them into a dedicated "Winners" campaign or an Advantage+ Shopping Campaign. Use the 70-20-10 budget rule: allocate 70% of your budget to proven winners, 20% to recent strong performers, and 10% to testing new concepts. The full process, from initial Dynamic Creative testing to a validated winner, typically takes 10 to 14 days.
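For a concrete sense of the 70-20-10 rule, here is a minimal sketch that splits a total budget into the three buckets. The bucket names and the `split_budget` helper are hypothetical labels chosen for this example.

```python
# Percent of total budget per bucket, following the 70-20-10 rule.
BUDGET_SHARES = {"winners": 70, "recent_performers": 20, "new_tests": 10}


def split_budget(total, shares=BUDGET_SHARES):
    """Return the amount allocated to each bucket, rounded to cents."""
    if sum(shares.values()) != 100:
        raise ValueError("shares must add up to 100 percent")
    return {name: round(total * pct / 100, 2) for name, pct in shares.items()}


print(split_budget(1_000))
# {'winners': 700.0, 'recent_performers': 200.0, 'new_tests': 100.0}
```

On a $1,000 budget, that leaves $100 for new concepts, which is worth checking against the spend needed to give a new test a fair run.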

Tools like AdAmigo.ai simplify this process by letting one media buyer manage over 10 accounts at once. These platforms handle Dynamic Creative tests, pause underperforming ads based on your criteria, and validate winners through structured A/B testing. This frees you up to focus on strategy while ensuring your creative decisions are based on reliable data and scalable processes.

Conclusion

Dynamic creative testing thrives on systematic evaluation rather than guesswork. The five tips outlined here provide a clear path to identify winning ads more quickly and scale them with confidence. Start by applying the 3:2:2 method to structure your tests. Prioritize creative angles capable of shifting ROAS by 3–8×, and keep variations limited to 3–5 per element. This approach ensures reliable results without draining your budget. By isolating variables effectively, you’ll pinpoint exactly which headlines or visuals drive performance. Together, these strategies simplify the process of testing and scaling your ads.

Data backs up the benefits of structured testing. According to the RedClaw Performance Team, it is associated with 28% higher ROAS, and disciplined variation testing can reduce CPA by 20–30%. On Meta platforms, creative quality influences 47% of ad performance variability, with top-performing creatives achieving ROAS gains of 5–8×.

While manual optimization restricts most marketers to managing just 1–3 accounts, AI-powered tools like AdAmigo.ai change the game. These tools handle creative generation, bulk ad launches, budget tweaks, and performance monitoring 24/7. This automation frees you to focus on strategy while your campaigns gain momentum.

To maintain strong performance, establish clear guardrails. Pause any ad that exceeds 2× your target CPA without conversions, promote proven winners to scaling campaigns, and refresh creatives every 21–28 days to avoid creative fatigue. Continuously feed fresh ideas into your testing pipeline. Success on Meta doesn’t belong to those with the largest budgets - it belongs to those who test smarter, scale faster, and cut underperforming ads early.

FAQs

How do I know I have enough data to pick a winner?

A winner should show a clear, consistent lead in key metrics such as ROAS (Return on Ad Spend), CTR (Click-Through Rate), or conversion rate. Run the test for 7–14 days so it clears the learning phase and smooths out daily fluctuations, and wait until each variant has at least 1,000 impressions and 10–30 conversions. Differences that show up in the first three days are usually too noisy to act on.

Should I use ABO or CBO for Dynamic Creative tests?

ABO, or Ad Set Budget Optimization, is the better fit for Dynamic Creative tests on Meta. With ABO, you set the budget at the ad set level, so each test gets consistent spend and more even exposure while data accumulates. CBO, or Campaign Budget Optimization, lets Meta's algorithm shift budget toward early leaders, which can starve other tests before they have enough data to be judged fairly.

When should I move a winning creative into scaling?

Move a creative into scaling once it has completed the 7–14 day test and hit your success threshold, such as a 3×+ ROAS or 30+ conversions at or below your target CPA. At that point, promote it to a scaling campaign such as Advantage+ Shopping, then increase the budget gradually and test it with new placements or audience segments. Monitor performance closely to catch ad fatigue early, and scale incrementally so the campaign stays efficient.