How Iterative Testing Improves Meta Ad Copy

A 3-step iterative A/B testing approach to optimize Meta ad copy, reduce ad fatigue, and scale winning creatives faster with AI-assisted automation.

Running Meta ads without testing is like gambling with your budget. Iterative testing changes that by focusing on small, data-backed adjustments to improve ad performance. Instead of guessing what works, you test different elements (like headlines or visuals) systematically to find winning combinations. This approach reduces wasted spend, combats ad fatigue, and ensures better results over time.

Key takeaways:

- Ad fatigue happens after 2–4 weeks, lowering click-through rates and increasing costs.
- False positives can mislead you into scaling ads that don’t perform well long-term.
- Iterative testing solves these issues by isolating variables and using A/B tests to identify what works.
- Tools like AdAmigo.ai automate this process, enabling faster testing, scaling, and optimization.

The bottom line: Testing systematically and using AI tools can help you save time, reduce costs, and improve ad performance on Meta.

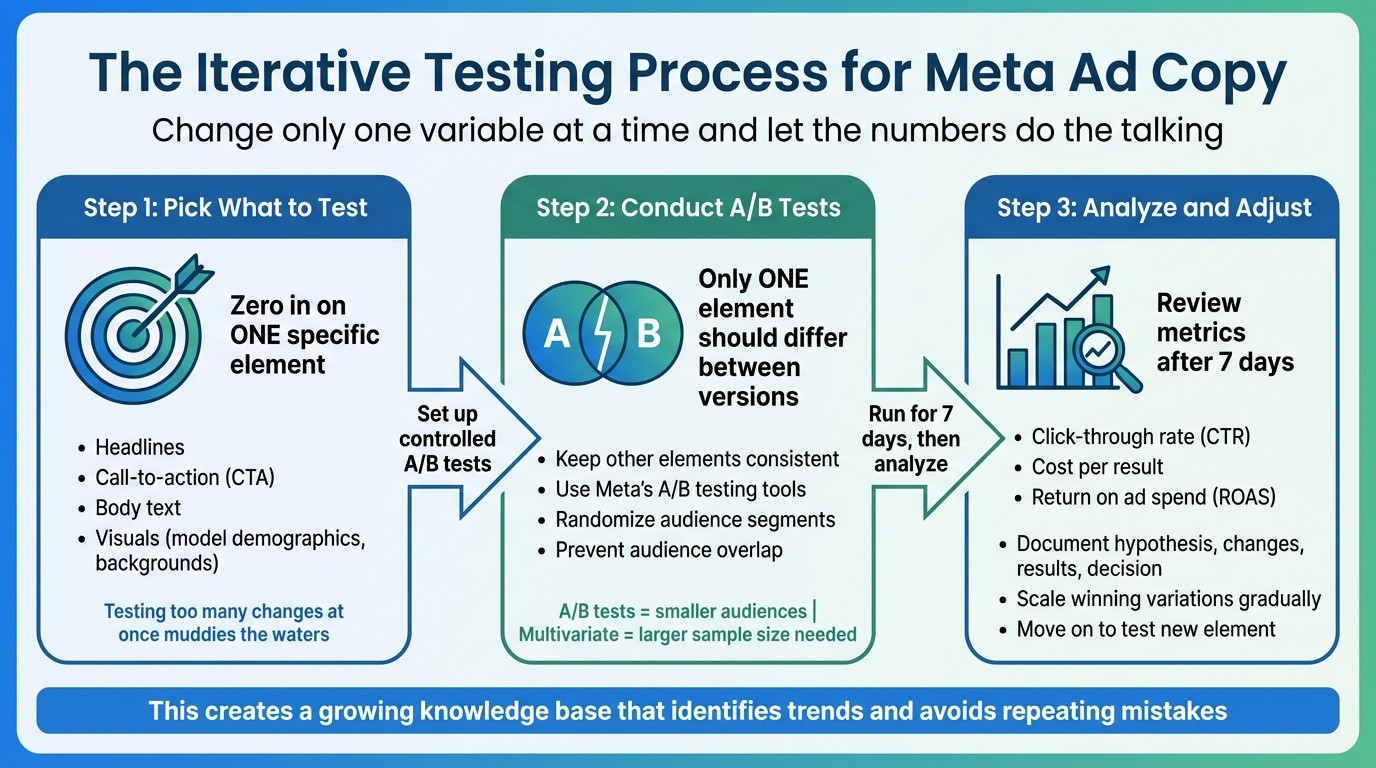

The Iterative Testing Process for Meta Ad Copy

3-Step Iterative Testing Process for Meta Ad Copy Optimization

This process focuses on addressing common ad performance challenges through precise, data-backed adjustments. The secret to effective testing lies in changing only one variable at a time and letting the numbers do the talking. Here's how to approach it step by step.

Step 1: Pick What to Test

Zero in on one specific element for testing. This could be a headline, a call-to-action (CTA), body text, or even the visuals - like experimenting with different model demographics or background designs. Testing too many changes at once muddies the waters, making it hard to tell what actually influenced the results.

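Once you've identified the element, set up a controlled A/B test that changes only that element. Keeping each test this narrow also helps lower creative testing costs, because you only produce the variations you actually need.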

Step 2: Conduct A/B Tests

For accurate results, only one element should differ between test versions. For instance, if you're testing two headlines, ensure the image and CTA remain consistent across both ads. Use Meta's built-in A/B testing tools to randomize audience segments and prevent overlap, which could cause your ads to compete against each other.
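
The one-variable rule is easy to check before launch. The sketch below is only an illustration: `AdVariant` and its fields are hypothetical names, not part of Meta's tools or any API, and the ad copy is made up. It lists which elements differ between two versions and confirms that exactly one does.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AdVariant:
    """Hypothetical container for the elements of one ad."""
    headline: str
    body: str
    cta: str
    image: str


def differing_elements(a: AdVariant, b: AdVariant) -> list[str]:
    """Return the names of the elements that differ between two variants."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


def is_valid_ab_pair(a: AdVariant, b: AdVariant) -> bool:
    """A clean A/B pair differs in exactly one element."""
    return len(differing_elements(a, b)) == 1


control = AdVariant("Save 20% today", "Free shipping on all orders.", "Shop Now", "hero.png")
variant = AdVariant("Your cart misses you", "Free shipping on all orders.", "Shop Now", "hero.png")

print(differing_elements(control, variant))  # ['headline']
print(is_valid_ab_pair(control, variant))    # True
```

If the list comes back with two or more names, the pair is closer to a multivariate test and would need a larger sample to interpret.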

A/B tests are ideal for evaluating one change with smaller audiences, whereas multivariate tests explore multiple changes but require a larger sample size.

When the data is in, it's time to analyze and refine.

Step 3: Analyze and Adjust

After running the test for 7 days, review metrics like click-through rate (CTR), cost per result, and return on ad spend (ROAS) using an ad performance analyzer. Document everything - your hypothesis, the change you made, the results, and your decision. This creates a growing knowledge base that helps identify trends and avoids repeating mistakes.
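
As a rough sketch of the review step, the three metrics can be computed from raw totals and stored next to the hypothesis and decision. The function names, the `ExperimentRecord` fields, and all numbers below are illustrative assumptions, not output from any specific tool.

```python
from dataclasses import dataclass


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate: clicks divided by impressions."""
    return clicks / impressions if impressions else 0.0


def cost_per_result(spend: float, results: int) -> float:
    """Spend divided by the number of results (e.g. purchases or leads)."""
    return spend / results if results else float("inf")


def roas(revenue: float, spend: float) -> float:
    """Return on ad spend: attributed revenue divided by spend."""
    return revenue / spend if spend else 0.0


@dataclass
class ExperimentRecord:
    """One row in the testing log (field names are illustrative)."""
    hypothesis: str
    change: str
    ctr: float
    cost_per_result: float
    roas: float
    decision: str


# Made-up totals for a single variant after a 7-day test.
record = ExperimentRecord(
    hypothesis="A question headline will lift CTR",
    change="Headline only",
    ctr=ctr(clicks=150, impressions=10_000),
    cost_per_result=cost_per_result(spend=200.0, results=10),
    roas=roas(revenue=600.0, spend=200.0),
    decision="scale gradually",
)
print(record)
```

Keeping one record per test, in a spreadsheet or a list like this, is what turns individual results into the knowledge base described above.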

If you find a winning variation, scale it gradually while moving on to test a new element. This keeps your ads evolving and improving over time.
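
The article does not say how large each budget increase should be, so the sketch below assumes a 20% step purely for illustration. `scaling_schedule` is a hypothetical helper that lists the budget levels between a starting and a target daily budget.

```python
def scaling_schedule(start_budget: float, target_budget: float,
                     step_pct: float = 0.20) -> list[float]:
    """List budget levels from start to target, raising by step_pct each step.

    step_pct=0.20 is an assumed increment, not a figure from the article.
    """
    if start_budget <= 0 or step_pct <= 0:
        raise ValueError("start_budget and step_pct must be positive")
    schedule = [round(start_budget, 2)]
    current = start_budget
    while current < target_budget:
        # Never overshoot the target on the final step.
        current = min(current * (1 + step_pct), target_budget)
        schedule.append(round(current, 2))
    return schedule


print(scaling_schedule(100.0, 200.0))
# [100.0, 120.0, 144.0, 172.8, 200.0]
```

Checking performance at each level before moving to the next keeps a weak "winner" from absorbing the full target budget.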

How AdAmigo.ai Speeds Up Iterative Testing

AdAmigo.ai takes the well-established iterative testing process and supercharges it with automation. Managing multiple campaigns and creative variations manually can be time-consuming and inefficient. By automating the entire process, AdAmigo.ai helps you test faster, identify top performers, and scale successful strategies without constant manual intervention. This means quicker, data-backed decisions and streamlined optimizations.

AI-Generated Ad Copy Variations

With the AI Ads Agent, AdAmigo analyzes your best-performing ads, and even your competitors' ads, to create fresh ad copy that matches your brand's voice. Instead of spending hours brainstorming and writing dozens of headlines or CTAs, you get variations generated from proven winners in your account. This ensures a steady flow of test-ready content while avoiding creative burnout. Over time, the system refines its understanding of your style, allowing it to produce consistent yet innovative copy that explores new ways to engage your audience.

Automated Improvements with AI Actions

The AI Actions feature provides you with a daily checklist of impactful optimizations. Whether it's pausing underperforming headlines, scaling successful CTAs, or adjusting budgets, every recommendation comes with a clear explanation. You can choose to approve changes manually or activate autopilot mode for hands-free management. The system works around the clock, identifying issues like unusual spending patterns, broken links, or performance drops as soon as they happen.

Fast Testing with Bulk Ad Launch

The Bulk Ad Launch feature allows you to deploy multiple ad variations in just a few minutes. Simply upload your creatives to Google Drive, provide a brief, and AdAmigo handles the rest - generating copy, structuring campaigns, and publishing ads directly to your Meta account. This is a game-changer for testing multiple combinations of headlines, CTAs, or body text at once. Instead of rolling out a handful of ads over several days, you can launch dozens - or even hundreds - immediately, letting the data quickly identify the top performers.

Benefits of Iterative Testing for Meta Ads

Better Metrics Through Data-Driven Changes

Focusing on one element at a time isolates the variable, so you can pinpoint exactly which change is driving performance. Over time, these small, incremental adjustments add up to lower costs per result, higher click-through rates (CTR), and improved return on ad spend (ROAS). This methodical approach not only sharpens your metrics but also helps you build a reliable foundation for future campaigns.

Building a Library of Winning Ad Copy

Each test contributes to a growing collection of ad elements that have been proven to work. This library of headlines, calls-to-action (CTAs), and messaging angles becomes an invaluable resource. When performance dips or you’re rolling out a new product, you can pull from this tested pool instead of starting fresh. A simple spreadsheet tracking metrics like CTR, conversion rate, and cost per conversion can transform into a goldmine of insights. Look for patterns, refine your strategies, and apply winning formulas across campaigns. The 70/20/10 rule is a practical way to manage your budget: dedicate 70% to proven strategies, 20% to emerging ideas, and 10% to experimental testing.
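
As a quick worked example of the 70/20/10 rule, the sketch below splits a total budget into the three buckets. The bucket names and the $1,000 figure are illustrative.

```python
def split_budget(total: float) -> dict[str, float]:
    """Split a budget 70/20/10 across proven, emerging, and experimental ads."""
    shares = {"proven": 0.70, "emerging": 0.20, "experimental": 0.10}
    return {name: round(total * share, 2) for name, share in shares.items()}


print(split_budget(1000.0))
# {'proven': 700.0, 'emerging': 200.0, 'experimental': 100.0}
```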

Manual vs. AI-Driven Iterative Testing

The choice between manual and AI-driven testing boils down to efficiency and scale. While manual testing requires significant time and effort, AI-driven solutions streamline the process, delivering faster and more consistent results. Here’s a side-by-side comparison:

| Feature | Manual Iterative Testing | AI-Driven Iterative Testing (e.g., AdAmigo.ai) |
|---|---|---|
| Testing Speed | Takes days or weeks | Real-time, continuous testing |
| Number of Variations | Limited to 3-5 at a time | Handles dozens or even hundreds simultaneously |
| Analysis Time | Requires manual review, often delayed | Provides instant, automated insights |
| Consistency | Relies on team availability | Operates 24/7 without interruptions |
| Scaling Winners | Involves manual budget adjustments | Automatically scales based on performance |
| Risk Management | Needs constant monitoring | Includes automatic safeguards like kill switches and budget limits |

AI-driven testing doesn’t replace your strategic input - it enhances it. You still define the goals and approve the creative direction, but the system takes care of the repetitive tasks like monitoring, optimizing, and scaling. This allows you to focus on high-level decisions while achieving results faster than traditional methods ever could.

Conclusion

Low engagement and weak click-through rates call for a more methodical approach: testing each element systematically to uncover what works. The old-school tactic of launching a handful of ads and crossing your fingers just doesn’t cut it anymore. That’s where AI tools step in to bring precision and efficiency.

Traditional manual testing typically limits teams to managing 5–10 active creatives at a time. But with AI-driven platforms like AdAmigo.ai, the game changes. This tool can produce and test 100+ unique ad variations every week. Plus, the cost advantage is hard to ignore: AI-generated scripts run around $2–5 each, compared to the hefty $50–100 per creative charged by agencies. It's not just about saving money - it’s about testing at a scale that was previously out of reach.

Here’s another crucial point: roughly 80% of new creatives fail. That means volume is key to discovering the small percentage of ads that truly perform. AdAmigo takes care of the heavy lifting, automating everything from test launches to budget optimization and scaling successful creatives. You can either let the AI operate autonomously or review and approve every change - it’s entirely up to you.
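
To see why volume matters, here is a back-of-the-envelope sketch. It assumes each creative fails independently with the same 80% probability, which is a simplification; real creatives are rarely independent. Both function names are hypothetical.

```python
import math


def chance_of_at_least_one_winner(n_creatives: int, fail_rate: float = 0.80) -> float:
    """Probability that at least one of n creatives succeeds.

    Assumes every creative fails independently with probability fail_rate.
    """
    return 1 - fail_rate ** n_creatives


def creatives_needed(target_chance: float, fail_rate: float = 0.80) -> int:
    """Smallest n whose chance of at least one winner reaches target_chance."""
    return math.ceil(math.log(1 - target_chance) / math.log(fail_rate))


for n in (5, 10, 20):
    print(n, round(chance_of_at_least_one_winner(n), 3))

print(creatives_needed(0.95))  # 14
```

Under these assumptions, five creatives leave roughly a one-in-three chance of finding no winner at all, while about 14 are enough to push the chance of at least one winner past 95%.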

Getting started is straightforward. Connect your Meta ad account, define your KPIs, and follow a Meta ads conversion optimization checklist to brief the AI on your goals. From there, you’ll receive daily recommendations for campaigns, AI audience profiling, budget tweaks, and fresh creatives. You can review, fine-tune, or publish them automatically. Ultimately, brands that excel on Meta are the ones that test, learn, and adapt faster than the competition. With tools like AdAmigo, you can focus on growth while the AI handles the rest.

FAQs

How do I choose what to test first in Meta ad copy?

When optimizing ads, focus first on high-impact elements like headlines, visuals, and CTAs (calls-to-action). These are the heavy hitters that can make or break engagement and conversions. For headlines, experiment with approaches like testimonials, questions, or statistics to find what connects with your audience.

Run these tests for at least 7 days to collect meaningful data before shifting attention to smaller tweaks. By prioritizing these core components, you’re zeroing in on the elements that have the biggest influence on your ad’s performance.

How long should I run an A/B test to trust the results?

To get meaningful results from an A/B test, you should run it for at least 7 days. This timeframe allows you to gather enough data while accounting for daily fluctuations in user behavior. Make sure each variant receives a fair share of the budget to avoid skewed results.

Aim for about 50 optimization events per variant - this could be clicks, conversions, or any other relevant metric. Hitting this threshold helps ensure your results are both statistically reliable and actionable. The goal is to confidently identify which variant performs better without jumping to conclusions based on insufficient data.
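
The two thresholds above (at least 7 days, about 50 optimization events per variant) can be written as a simple readiness check. This is a minimal sketch; `is_test_ready` and the variant names are hypothetical.

```python
MIN_DAYS = 7                  # minimum test duration from the guidance above
MIN_EVENTS_PER_VARIANT = 50   # approximate event threshold per variant


def is_test_ready(days_running: int, events_by_variant: dict[str, int]) -> bool:
    """True once the test has run long enough and every variant has enough events."""
    if not events_by_variant:
        return False
    long_enough = days_running >= MIN_DAYS
    enough_events = all(n >= MIN_EVENTS_PER_VARIANT for n in events_by_variant.values())
    return long_enough and enough_events


print(is_test_ready(7, {"headline_a": 62, "headline_b": 55}))  # True
print(is_test_ready(7, {"headline_a": 62, "headline_b": 31}))  # False
print(is_test_ready(4, {"headline_a": 62, "headline_b": 55}))  # False
```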

How can I avoid false positives when scaling a “winner”?

To make sure you're not misled by false positives when scaling a successful ad, rely on solid, trustworthy data. Start by running tests for at least 7 days and aim for a minimum of 50 optimization events per variation to validate the ad's performance. Once you've confirmed the results, scale cautiously. Gradually increase your budget in small increments while keeping a close eye on the outcomes.
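
The article does not name a statistical test. One common option for comparing two click-through rates is a two-proportion z-test, sketched below with only the standard library. The 0.05 cutoff and all counts are illustrative assumptions, and this is not a description of how Meta or AdAmigo.ai evaluates tests.

```python
import math


def two_proportion_p_value(clicks_a: int, impressions_a: int,
                           clicks_b: int, impressions_b: int) -> float:
    """Two-sided p-value for the difference between two click-through rates."""
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    # Pooled rate under the assumption that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if std_err == 0:
        return 1.0
    z = (rate_b - rate_a) / std_err
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) for the standard normal.
    return math.erfc(abs(z) / math.sqrt(2))


ALPHA = 0.05  # assumed significance cutoff

clear_gap = two_proportion_p_value(100, 10_000, 150, 10_000)
tiny_gap = two_proportion_p_value(100, 10_000, 105, 10_000)

print(clear_gap < ALPHA)  # True: the gap is unlikely to be noise
print(tiny_gap < ALPHA)   # False: too close to call, keep testing
```

A small p-value does not guarantee the ad will hold up at higher budgets, which is why the gradual scaling described above still applies.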

For added efficiency, consider using tools like AdAmigo.ai. These AI-driven platforms can automate both testing and scaling processes, helping you maintain consistent performance and minimize the risk of errors during optimization.