Meta's Fact-Checking Process for Ads

Meta's three-layer ad review (automation, third-party fact-checkers, and Community Notes) is designed to keep ads honest and AI-transparent.

Meta ensures ads on Facebook and Instagram meet strict guidelines to prevent misinformation, scams, and misleading practices. Here's how it works:

Automated Screening: Ads are reviewed by machine learning systems for policy violations before going live.

Human Review: Over 15,000 human reviewers and independent fact-checkers analyze flagged content for accuracy and compliance.

Transparency Tools: Features like the Meta Ad Library and "AI info" labels provide users with insights into ad origins and whether AI was used in their creation.

Stricter Rules: Political and AI-generated ads require clear labeling and identity verification. Violations can lead to ad account restrictions or bans.

Community Notes: Introduced in 2025 as a replacement for Meta's U.S. third-party fact-checking program, this crowdsourced tool adds context without enforcement power.

Meta's multi-layered approach combines automation, human oversight, and user-driven tools to maintain trust and accountability in its advertising ecosystem.

How Meta Reviews Ads for Misinformation

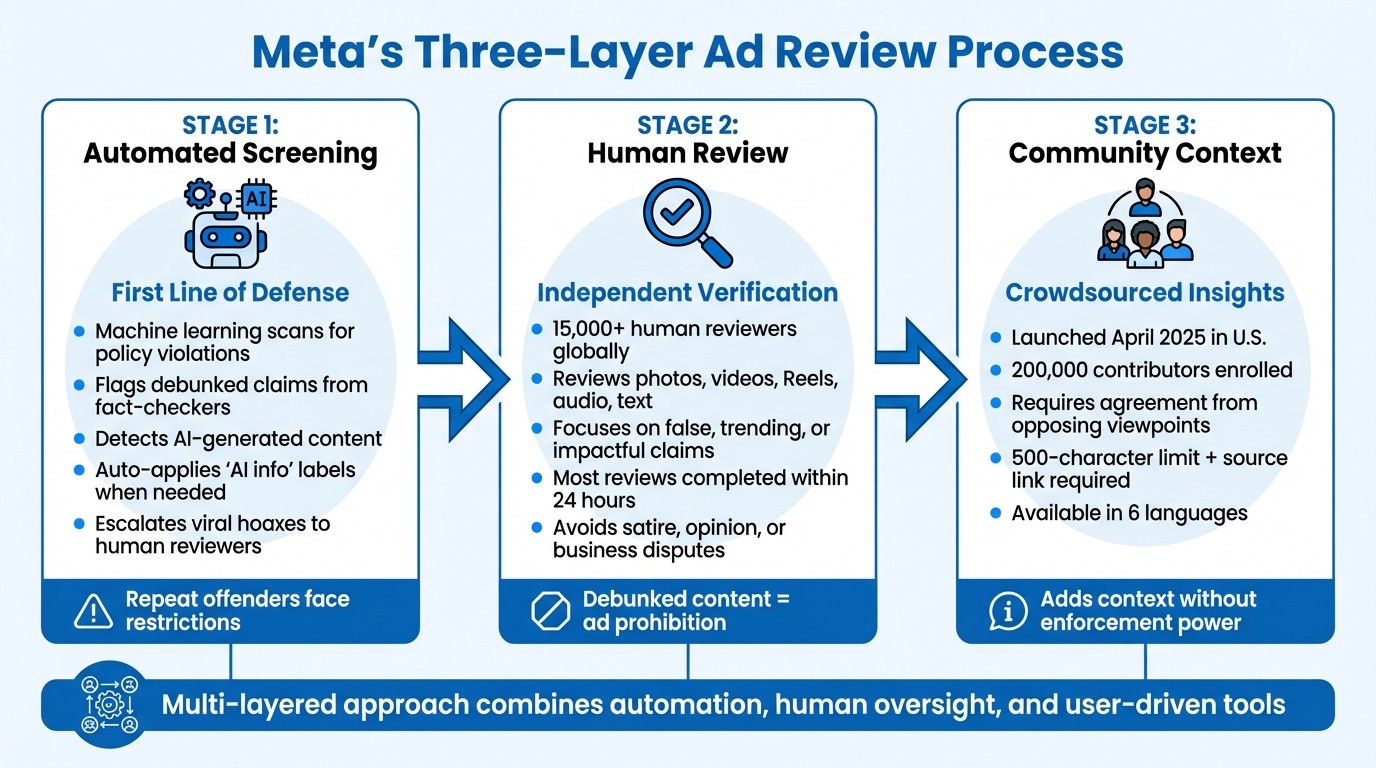

Meta's Three-Layer Ad Review Process for Misinformation Detection

Meta tackles misinformation in ads through a three-layered approach: automated screening, third-party fact-checking, and compliance monitoring through community-driven insights. Each step plays a distinct role in identifying and addressing false information during an ad's lifecycle.

Automated Screening Before Ads Go Live

When an ad is submitted to Meta, the first line of defense is an automated system powered by machine learning. These tools scan ad content for possible violations of Meta's Community Standards and Advertising Standards. This includes flagging claims that independent fact-checkers have already debunked.

The system also identifies ads that use generative AI for significant edits or photorealistic human images. When detected, these ads are labeled with an "AI info" tag displayed alongside the "Sponsored" label. Additionally, any content containing viral hoaxes or proven false claims is escalated directly to Meta’s human fact-checking partners. Advertisers who repeatedly submit flagged ads can face restrictions across Meta’s platforms.

This automated process works hand in hand with human oversight to ensure thorough reviews, and understanding it is a core part of Meta ad policy training for internal teams.
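Advertisers can approximate the "already debunked" check on their own copy before submitting. The sketch below is deliberately naive and is not how Meta's classifiers work: it assumes you maintain your own list of claims that fact-checkers have rated false, and it only catches near-verbatim matches. The function names `normalize` and `debunked_matches` are made up for this example.

```python
import re
from typing import Iterable, List


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so trivial variations still match."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def debunked_matches(ad_text: str, debunked_claims: Iterable[str]) -> List[str]:
    """Return every claim from the (hypothetical) list that appears in the ad copy.

    Matching is on whole normalized phrases, so paraphrases are NOT detected.
    """
    haystack = f" {normalize(ad_text)} "
    return [c for c in debunked_claims if f" {normalize(c)} " in haystack]


if __name__ == "__main__":
    claims = ["Product X cures diabetes"]  # assumption: your own curated list
    print(debunked_matches("New! Product X cures diabetes, guaranteed.", claims))
```

A phrase match like this is only a first pass. Reworded claims need human review, which mirrors the escalation to fact-checkers described above.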

Third-Party Fact-Checkers in Ad Review

Independent fact-checkers take over where the automated system leaves off. These organizations examine a range of ad formats - like photos, videos, Reels, audio, and text-only ads - focusing on claims that are false, trending, or could have a significant impact. Meta strictly prohibits ads containing content debunked by these fact-checkers.

However, their reviews avoid areas like satire, humor, business disputes, or content clearly marked as opinion or debate. Advertisers with repeated violations face stricter enforcement measures.

Community Notes for Ad Context

In April 2025, Meta introduced a crowdsourced system called Community Notes, replacing its U.S. third-party fact-checking program. Inspired by X’s open-source algorithm, Community Notes aims to add context to ads without limiting their distribution.

To ensure fairness, the algorithm requires agreement from contributors with differing viewpoints before a note is published. Potential contributors must meet specific criteria: they must be at least 18 years old, have an account in good standing for at least six months, and use two-factor authentication.

"This isn't majority rules. No matter how many contributors agree on a note, it won't be published unless people who normally disagree decide that it provides helpful context." – Meta

Notes are concise, capped at 500 characters, and must include a source link for verification. By early 2025, around 200,000 users in the U.S. had signed up to participate, and the system launched in six languages: English, Spanish, Chinese, Vietnamese, French, and Portuguese.
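The eligibility criteria and note format rules above are simple enough to express as checks. The following is a minimal sketch, assuming hypothetical record fields (`age_years`, `account_age_months`, and so on) that do not come from any Meta API. The last function is only a toy stand-in for the cross-viewpoint requirement; the article does not describe how the real ranking algorithm works.

```python
import re
from dataclasses import dataclass
from typing import List, Tuple

MAX_NOTE_CHARS = 500         # cap stated in the article
MIN_AGE_YEARS = 18           # stated in the article
MIN_ACCOUNT_AGE_MONTHS = 6   # stated in the article


@dataclass
class Contributor:
    # Hypothetical record; field names are illustrative only.
    age_years: int
    account_age_months: int
    in_good_standing: bool
    two_factor_enabled: bool


def is_eligible(c: Contributor) -> bool:
    """Apply the three published eligibility criteria."""
    return (
        c.age_years >= MIN_AGE_YEARS
        and c.in_good_standing
        and c.account_age_months >= MIN_ACCOUNT_AGE_MONTHS
        and c.two_factor_enabled
    )


def note_problems(text: str) -> List[str]:
    """Return the reasons a draft note would fail the stated format rules."""
    problems = []
    if len(text) > MAX_NOTE_CHARS:
        problems.append("too long")
    if not re.search(r"https?://\S+", text):
        problems.append("missing source link")
    return problems


def has_cross_viewpoint_agreement(ratings: List[Tuple[str, bool]]) -> bool:
    """Toy model: raters from at least two viewpoint groups rated the note helpful.

    Each rating is (group_label, found_helpful). Group labels are an assumption.
    """
    helpful_groups = {group for group, helpful in ratings if helpful}
    return len(helpful_groups) >= 2
```

The third check illustrates why "this isn't majority rules": any number of helpful ratings from a single group still returns `False`.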

Rules for Political and AI-Generated Ads

Meta has implemented stricter transparency rules for political and AI-generated ads to combat misinformation and ensure users can clearly identify who is behind these advertisements.

Identity Verification and Disclaimers

Advertisers running ads related to social issues, elections, or politics must complete Meta's identity verification process well in advance. This process may also be triggered if Meta’s systems detect signs of inauthentic behavior.

All such ads must include a "Paid for by" disclaimer and are stored in the Meta Ad Library for seven years. Additionally, advertisers are barred from using lead ad forms to collect sensitive political information, such as voting intentions or candidate preferences.
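These requirements lend themselves to a pre-submission checklist. The sketch below is one way to encode them, assuming a hypothetical `PoliticalAdDraft` record and made-up lead form field names. It is not part of any Meta tooling.

```python
from dataclasses import dataclass, field
from typing import List

# Lead form fields the article says are off limits; the key names are illustrative.
SENSITIVE_LEAD_FIELDS = {"voting_intention", "candidate_preference"}


@dataclass
class PoliticalAdDraft:
    # Hypothetical draft record for an ad about social issues, elections, or politics.
    advertiser_verified: bool
    paid_for_by: str = ""
    lead_form_fields: List[str] = field(default_factory=list)


def preflight(ad: PoliticalAdDraft) -> List[str]:
    """Return a list of problems to fix before submitting the ad."""
    issues = []
    if not ad.advertiser_verified:
        issues.append("identity verification incomplete")
    if not ad.paid_for_by.strip():
        issues.append('missing "Paid for by" disclaimer')
    blocked = sorted(SENSITIVE_LEAD_FIELDS.intersection(ad.lead_form_fields))
    if blocked:
        issues.append("lead form asks for: " + ", ".join(blocked))
    return issues
```

An empty list means the draft passes these three checks only. It says nothing about the ad's claims or creative.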

"Our Ad Library offers a view of all ads currently running across our apps and services. It also offers additional information on ads about social issues, elections or politics, including range of spend, who saw the ad and the entities responsible for those ads." – Meta Transparency Center

Labeling AI-Edited and AI-Created Content

Meta requires clear labeling for any ads that include AI-generated or significantly altered content. Minor edits, like resizing or color adjustments, don’t need a label. However, material changes - such as AI-generated backgrounds or synthetic images - must be disclosed.

Typically, the "AI info" label is accessible through the three-dot menu in the ad’s top-right corner. However, if an ad features an AI-generated photorealistic human, the label must appear prominently next to the "Sponsored" tag. Advertisers are expected to proactively disclose digital alterations when creating ads, as relying solely on Meta's automated detection systems could lead to ad rejection or account restrictions.

"For ads about social issues, elections or politics, advertisers are already required to disclose if the image, video or audio are digitally created or altered, including through the use of third-party AI tools." – Meta Help Centre

This approach ensures that both automated systems and advertiser transparency work together to uphold ad integrity.
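The labeling rules in this section reduce to a small decision function. The sketch below assumes made-up edit identifiers such as `"ai_background"`; it covers only the cases the article names and raises on anything else, so an unknown edit type is never silently treated as minor.

```python
from enum import Enum
from typing import Iterable


class LabelPlacement(Enum):
    NONE = "no label needed"
    MENU = "AI info in the three-dot menu"
    PROMINENT = "AI info next to the Sponsored tag"


# Edit types named in the article; the string keys are invented for this sketch.
MINOR_EDITS = {"resize", "color_adjustment"}
MATERIAL_EDITS = {"ai_background", "synthetic_image"}


def label_placement(edits: Iterable[str], photorealistic_ai_human: bool = False) -> LabelPlacement:
    """Decide where the "AI info" label belongs for a given set of edits."""
    edits = list(edits)
    unknown = [e for e in edits if e not in MINOR_EDITS | MATERIAL_EDITS]
    if unknown:
        # Force a manual decision instead of guessing.
        raise ValueError(f"unclassified edit types: {unknown}")
    if photorealistic_ai_human:
        return LabelPlacement.PROMINENT
    if any(e in MATERIAL_EDITS for e in edits):
        return LabelPlacement.MENU
    return LabelPlacement.NONE
```

For example, `label_placement(["resize", "ai_background"])` returns `LabelPlacement.MENU`, while passing `photorealistic_ai_human=True` always returns `LabelPlacement.PROMINENT`.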

Meta Ad Library and Transparency

Meta strengthens transparency by maintaining a public Ad Library. Users can search all currently active ads, while ads about social issues, elections, or politics stay archived for seven years, with details about sponsors, spending, and who saw them.

Advertisers should regularly check their Ad Library entries to confirm that "Paid for by" disclaimers and AI labels are accurate. The library also provides insights into competitors' disclosures, helping advertisers align with industry standards. Additionally, the "Info and Ads" section on Facebook Pages displays all active ads linked to a specific page, adding another layer of accountability.
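A periodic audit of your own entries can be scripted once you have the records in hand. The sketch below assumes each entry is a plain dictionary you have compiled yourself, with hypothetical keys (`id`, `paid_for_by`, `uses_generative_ai`, `has_ai_info_label`). These keys are not the Ad Library's actual field names.

```python
from typing import Dict, List


def audit_entries(entries: List[Dict], expected_payer: str) -> List[str]:
    """Return the IDs of entries whose disclaimer or AI label looks wrong."""
    flagged = []
    for entry in entries:
        payer = entry.get("paid_for_by", "").strip().lower()
        payer_ok = payer == expected_payer.strip().lower()
        # An AI label is only required when generative AI was used.
        label_ok = (not entry.get("uses_generative_ai", False)) or entry.get(
            "has_ai_info_label", False
        )
        if not (payer_ok and label_ok):
            flagged.append(entry["id"])
    return flagged
```

Running this on a schedule gives you a list of ads to inspect by hand. It does not replace checking how the disclaimer actually renders in the library.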

"By building and refining our transparency around how AI impacts the ads users see, we aim to build trust and increase our accountability." – Pedro Pavón, Director, Monetization Policy, Meta

These policies, combined with Meta's thorough review process, reflect the platform’s effort to tackle misinformation and maintain trust in its advertising ecosystem.

Using AI Tools to Stay Compliant

Meta's layered review process is designed to enforce ad compliance, but advertisers can take a more proactive approach by using AI tools. Meta reviews ads quickly, yet treating rejections as your only compliance check can lead to account restrictions that limit your ability to advertise across all Meta platforms.

AI for Compliant Creative Generation

Tools like AdAmigo.ai help advertisers create ads that align with Meta's Community Standards. This platform not only generates compliant ads but also monitors campaigns in real time to avoid triggering Meta's machine learning flags. A standout feature is the Free Meta Ad Policy Checker, which scans Facebook and Instagram ads for risky content - whether text or images - before launch. This early detection can prevent disapprovals that might otherwise impact your account.

Given that Meta employs over 15,000 reviewers to support safety operations, identifying potential issues before they arise is crucial. AdAmigo.ai bridges the gap between creative generation and compliance, streamlining the process while ensuring adherence to Meta's policies.

Automated Ad Scaling Within Policy Limits

Scaling campaigns while staying within policy guidelines can be challenging, but AdAmigo's Bulk Ad Launcher simplifies this process. This tool allows advertisers to launch dozens - or even hundreds - of ads in minutes, all while ensuring compliance. It structures campaigns, generates policy-compliant copy, and applies targeting in line with Meta's transparency rules for AI-generated content.

For example, edits like AI-generated backgrounds require disclosure labels, while minor changes, such as resizing, do not. Meta's systems may reduce delivery for ads flagged as "lower quality", but AdAmigo's optimization engine addresses this by aligning landing pages with ad creative, helping maintain delivery efficiency.

Early Problem Detection with AdAmigo Protect

Continuous monitoring is key to avoiding policy violations, and AdAmigo Protect provides this safeguard. It keeps a close eye on campaigns, identifying delivery issues or potential policy breaches before they become major problems. This is especially important under Meta's policy for repeat misinformation offenders.

"Advertisers that repeatedly post information deemed to be false may have restrictions placed on their ability to advertise across Meta technologies." – Meta Transparency Center

Meta's Account Quality interface allows advertisers to track rejected ads and request reviews if they believe a decision was incorrect. However, by the time a review is requested, valuable time may already have been lost. AdAmigo Protect helps you stay ahead by flagging anomalies - such as unexpected performance drops or unusual behavior - before they escalate into account-level issues.

Third-Party Fact-Checkers vs. Community Notes

Meta's layered approach to addressing misinformation includes two distinct systems: third-party fact-checkers and Community Notes. While both aim to combat false information, they function differently and have unique consequences for advertisers on platforms like Facebook, Instagram, and Threads.

System Comparison

Third-party fact-checkers focus on reviewing specific types of content, including ads, articles, photos, videos, Reels, audio, and text posts. Their work targets claims that are false, trending, urgent, or impactful (such as unapproved health claims) within particular regions. These professionals steer clear of opinions, satire, or disputes, homing in on viral falsehoods and hoaxes.

In contrast, Community Notes relies on user-generated contributions to add context to posts. Unlike third-party fact-checkers, this system doesn’t label content as false or limit its reach. Instead, it provides additional context without enforcement power, especially when dealing with paid advertisements. This distinction makes Community Notes more of a supplementary tool rather than a strict regulatory mechanism.

What This Means for Advertisers

The implications for advertisers are clear. Third-party fact-checkers play a significant role in ad enforcement. Meta prohibits ads containing content debunked by these fact-checkers, and repeated violations can result in restricted ad access. On the other hand, Community Notes, while informative, does not carry the same enforcement weight. Advertisers must be cautious to steer clear of claims that could attract scrutiny from professional fact-checkers, as their rulings have direct consequences on ad delivery and campaign success.

Conclusion: Staying Compliant on Meta

Meta's approach to fact-checking combines automated systems, third-party reviews, and post-live content monitoring. With the support of over 15,000 global reviewers - most completing their evaluations within 24 hours - Meta enforces strict policies to combat misinformation. Advertisers who repeatedly share content flagged by fact-checkers face escalating penalties, which could ultimately lead to being banned from Meta platforms.

Staying compliant doesn’t have to be complicated. Keep a close eye on your Account Quality to catch early rejections, disclose any third-party AI involvement in political or social ads, and ensure your landing pages match the promises made in your ad content. Remember, Meta evaluates the entire customer journey, not just the ad itself.

To simplify compliance and boost performance, consider using third-party AI compliance tools. For example, platforms like AdAmigo.ai can pre-screen content and monitor account health, helping to prevent issues before they arise. Since Meta's review system relies heavily on automated tools to analyze images, text, targeting, and landing pages, adding an AI pre-screening step can help you avoid policy violations before submitting your ads.

Key Takeaways

Here are the main points to keep in mind for staying compliant:

Align your creative process with Meta's transparency rules. If you're using AI to create or edit ads - especially those with photorealistic human images - be sure to apply the proper "AI info" labels to meet compliance standards. For ads related to housing, employment, or credit, self-identify under the Special Ad Category and use only approved targeting options.

Use AI to maintain compliance and optimize campaigns. Tools like AdAmigo.ai can cross-check your ad content against fact-checking databases, flag elements that might require special handling, and ensure your landing pages align with your messaging. Its AI Autopilot feature can continuously monitor your account, flag potential issues, and even implement improvements - either automatically or with your approval. This ensures your campaigns stay compliant while performing effectively.

As Pedro Pavón, Director of Monetization Policy at Meta, explains:

"By building and refining our transparency around how AI impacts the ads users see, we aim to build trust and increase our accountability."

FAQs

Why can my ad be rejected after approval?

Ads on Meta platforms can face rejection even after initial approval if they violate the platform's misinformation policies or community standards. For instance, if fact-checkers flag the content as false or it goes against specific content guidelines, the ad may be restricted or removed entirely.

When do I need an “AI info” label on an ad?

Meta now requires an “AI info” label for ads featuring images or videos created or heavily altered using its generative AI tools. This applies when there are material changes such as generating backgrounds, creating entirely new images, or producing photorealistic human visuals. Minor edits like resizing or color adjustments do not need a label. The goal is to maintain transparency about the role of AI in crafting the content.

How do Community Notes affect paid ads?

Community Notes adds user-written context to content but carries no enforcement power. Unlike a fact-checker rating, a note does not label an ad as false or limit its reach. The enforcement risk for advertisers comes from third-party fact-checkers, whose rulings directly affect ad delivery and can lead to restricted ad access after repeated violations.