Adaptive Targeting: Bias in AI Models

Ad-delivery algorithms can create demographic bias; learn causes, detection methods, and fixes like audits, data checks, and fairness steps.

AI-driven ad targeting can unintentionally lead to biased outcomes. Even when advertisers aim for neutrality, algorithms often skew ad delivery based on factors like ZIP codes, behavioral clustering, or historical data. This can result in discriminatory practices, particularly in sensitive areas like housing, employment, and credit.

Here’s what you need to know:

AI models often rely on proxy data, which can correlate with sensitive demographics.

Bias develops through flawed training data and feedback loops, amplifying disparities over time.

Legal cases, like Facebook’s 2019 Fair Housing Act lawsuit, highlight the risks of unchecked ad delivery systems.

Bias can harm ad performance by excluding potential customers and eroding trust.

To address this, companies should:

Audit training data for imbalances and detect ad metric anomalies that may signal underlying bias.

Adjust algorithms to minimize reliance on demographic proxies.

Conduct AI audits to identify and correct biases in ad delivery.

Use tools like AdAmigo.ai to balance ethical practices with campaign effectiveness.

Bias in AI isn’t just an ethical issue - it’s a business risk. Proactively addressing it supports compliance, protects brand reputation, and can improve outcomes.

How Bias Develops in Adaptive Targeting Algorithms

How Bias Develops and Spreads in AI Ad Targeting Systems

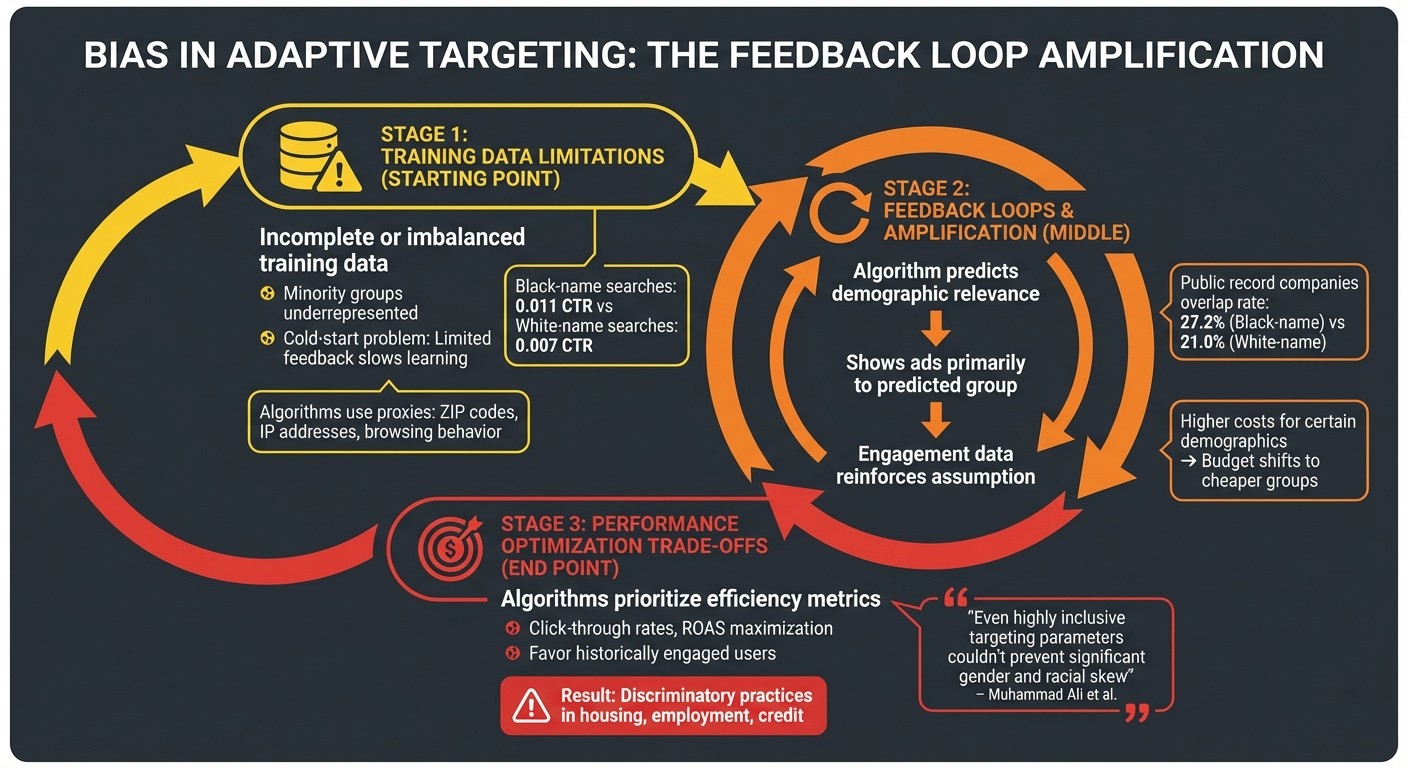

Bias in adaptive targeting algorithms stems from the way these systems learn and optimize. Even with good intentions, advertisers can unintentionally contribute to discriminatory outcomes. The combination of flawed data and the inner workings of algorithms creates a breeding ground for recurring biases.

Training Data Limitations

AI models are only as good as the data they’re trained on. When training data is incomplete or imbalanced, bias naturally follows. Minority groups are often underrepresented, leading to what's known as the "cold-start problem." This occurs when limited feedback slows the algorithm’s ability to learn about these groups effectively. For instance, a study on search advertising revealed that ads for criminal background checks were shown more frequently for names associated with Black individuals, even though those searches had a higher click-through rate (0.011 compared to 0.007 for white-name searches). The lack of sufficient data from minority populations not only skews initial learning but also feeds into feedback loops that amplify bias over time.
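One way to see why limited feedback slows learning is the standard error of an estimated click-through rate, which shrinks only with the square root of the number of impressions. The sketch below assumes a simple binomial model and made-up impression counts; it does not describe how any particular platform estimates relevance.

```python
import math


def ctr_standard_error(ctr, impressions):
    """Standard error of a click-through-rate estimate under a binomial model."""
    return math.sqrt(ctr * (1 - ctr) / impressions)


# Illustrative assumption: both segments share the same true CTR of 1%,
# but the smaller segment has 100x fewer impressions to learn from.
large_segment_se = ctr_standard_error(0.01, 100_000)
small_segment_se = ctr_standard_error(0.01, 1_000)

# The estimate for the smaller segment is 10x noisier (sqrt(100) = 10).
noise_ratio = small_segment_se / large_segment_se
print(f"large: {large_segment_se:.5f}, small: {small_segment_se:.5f}, ratio: {noise_ratio:.1f}")
```

With a noisier estimate, the system needs more exploration in the smaller segment before it can tell a good ad from a bad one.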

"Individuals in a minority segment are proportionately more likely to be test subjects for experimental content that may ultimately be rejected by the platform." - Anja Lambrecht and Catherine Tucker

Even when advertisers remove explicit factors like race or gender, algorithms often rely on proxies such as ZIP codes, IP addresses, or browsing behavior, which indirectly correlate with demographic traits.

Feedback Loops and Bias Amplification

Bias in adaptive systems doesn’t just reflect the data it’s trained on - it can grow through feedback loops. When an algorithm predicts that a particular demographic is more relevant for an ad, it shows the ad primarily to that group. The resulting engagement data reinforces the algorithm’s assumption, creating a cycle that amplifies the original bias.

Take competitive bidding as an example: public record companies saw an average overlap rate of 27.2% for Black-name searches compared to 21.0% for white-name searches. This demonstrates how algorithms can skew delivery patterns systematically. Additionally, higher costs for certain demographics lead algorithms to shift budgets toward less expensive groups in an effort to maximize clicks or impressions. Because these patterns reinforce themselves, balancing performance goals with fairness is difficult without effective guardrails.
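The reinforcement cycle can be illustrated with a small deterministic simulation. It is a sketch under stated assumptions: two hypothetical groups with identical true click-through rates, a slightly pessimistic starting estimate for the smaller group, and a greedy allocation rule with a small exploration share. None of the names or numbers come from a real ad platform.

```python
def simulate_feedback_loop(rounds=5, budget=1000, explore=0.05):
    """Greedy allocation between two groups that have the SAME true CTR.

    Returns group_a's cumulative share of impressions after each round.
    All numbers are illustrative assumptions, not measurements.
    """
    true_ctr = {"group_a": 0.010, "group_b": 0.010}
    # Cold start: group_b has little history and an unlucky early estimate.
    impressions = {"group_a": 1000.0, "group_b": 100.0}
    clicks = {"group_a": 10.0, "group_b": 0.8}

    history = []
    for _ in range(rounds):
        estimate = {g: clicks[g] / impressions[g] for g in impressions}
        favoured = max(estimate, key=estimate.get)
        for g in impressions:
            share = 1.0 - explore if g == favoured else explore
            shown = budget * share
            impressions[g] += shown
            # Use expected clicks so the example has no randomness.
            clicks[g] += shown * true_ctr[g]
        history.append(impressions["group_a"] / sum(impressions.values()))
    return history


shares = simulate_feedback_loop()
print([round(s, 3) for s in shares])
```

Even though both groups respond identically, the group with the weaker starting estimate never catches up, so the other group's share of delivery keeps growing.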

Performance Optimization Trade-offs

Bias doesn’t just affect fairness - it also shapes ad performance outcomes. Algorithms prioritize efficiency metrics like click-through rates or return on ad spend, but this focus can unintentionally result in discriminatory practices. For example, algorithms often favor users who have historically engaged with similar content, which can limit opportunities for certain groups in areas like employment or housing. Research on Facebook’s ad delivery system found that even highly inclusive targeting parameters couldn’t prevent significant gender and racial skew in employment and housing ads.

"Our results demonstrate previously unknown mechanisms that can lead to potentially discriminatory ad delivery, even when advertisers set their targeting parameters to be highly inclusive." - Muhammad Ali et al., Researchers

What may look like smart optimization - better click-through rates and lower costs - can hide deeper issues. Algorithms that chase efficiency often exploit correlations that improve metrics, regardless of the social consequences. Without considering fairness, these systems risk reinforcing inequalities under the guise of performance improvements.

How to Detect and Measure Bias in AI Models

Detecting and measuring bias in AI systems is no small task. It requires a careful look at multiple factors - accuracy, fairness, interpretability, and robustness - to identify and address discrimination before it becomes widespread. Bias can be subtle: algorithms often rely on proxies like ZIP codes or browsing history that correlate with protected characteristics, which makes the problem harder to detect. Below, we’ll explore key metrics and methods used to uncover these biases.

Metrics and Benchmarks for Measuring Bias

One of the first steps in detecting bias is measuring skewed delivery. This involves comparing the audience actually reached by an algorithm with the intended targeting parameters, particularly regarding gender and racial representation. For instance, research has shown that Facebook’s ad delivery algorithms can skew housing and employment ads, even when neutral targeting parameters are set by advertisers. This suggests that biases may stem from the platform’s own "relevance" predictions rather than advertiser intent.
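As a rough illustration of measuring skewed delivery, the sketch below compares each group's share of delivered impressions with its share of the eligible audience. The function name and the counts are hypothetical; in practice the inputs would come from whatever delivery breakdown your platform reports.

```python
def delivery_skew(eligible, delivered):
    """Return each group's delivered share minus its eligible-audience share.

    A positive value means the group saw more of the ad than its share of
    the targeted audience would suggest; a negative value means less.
    """
    eligible_total = sum(eligible.values())
    delivered_total = sum(delivered.values())
    return {
        group: delivered.get(group, 0) / delivered_total - count / eligible_total
        for group, count in eligible.items()
    }


# Hypothetical counts for illustration only.
eligible_audience = {"women": 50_000, "men": 50_000}
delivered_impressions = {"women": 7_000, "men": 3_000}

skew = delivery_skew(eligible_audience, delivered_impressions)
for group, value in skew.items():
    print(f"{group}: {value:+.1%}")
```

A value near zero for every group suggests delivery tracks the targeting; large gaps are a signal to investigate further, not proof of discrimination on their own.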

Another powerful tool is intersectional analysis. Testing for bias across individual attributes like gender or race is important, but it often misses deeper issues. Significant disparities can emerge when examining the intersection of multiple attributes - such as gender and race together.
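To see how single-attribute checks can miss a problem, consider this minimal sketch. The impression counts are invented so that delivery looks perfectly balanced by gender and by race separately, yet is heavily skewed at their intersection.

```python
from collections import Counter

# Hypothetical delivered impressions keyed by (gender, race).
delivered = {
    ("women", "black"): 400,
    ("women", "white"): 100,
    ("men", "black"): 100,
    ("men", "white"): 400,
}


def marginal_shares(cells, axis):
    """Share of impressions per value of one attribute (0 = gender, 1 = race)."""
    totals = Counter()
    for key, count in cells.items():
        totals[key[axis]] += count
    grand_total = sum(cells.values())
    return {value: count / grand_total for value, count in totals.items()}


def cell_shares(cells):
    """Share of impressions per intersectional cell."""
    grand_total = sum(cells.values())
    return {key: count / grand_total for key, count in cells.items()}


print(marginal_shares(delivered, 0))  # balanced by gender
print(marginal_shares(delivered, 1))  # balanced by race
print(cell_shares(delivered))         # but cells range from 10% to 40%
```

If the eligible audience were evenly split across the four cells, each would be expected to receive about 25% of impressions, so the 10% and 40% cells would only surface in the intersectional view.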

"This underscores the need for policymakers and platforms to carefully consider the role of the ad delivery optimization run by ad platforms themselves - and not just the targeting choices of advertisers - in preventing discrimination in digital advertising." - Muhammad Ali et al., Northeastern University

Metrics that track financial optimizations can also reveal bias. For example, in e-commerce studies, algorithms designed to offer discounts to price-sensitive customers were found to disproportionately favor higher-income individuals. These shoppers tended to respond more often to price changes, leading systems to allocate discounts in ways that reinforced existing economic disparities.

Cross-attribute comparisons offer additional insights into hidden inequities that might not show up through standard bias metrics. This process is similar to how AI enhances multivariate testing by analyzing complex data sets to find optimal performance patterns.

Comparing Models Across Sensitive Attributes

To understand bias more deeply, practitioners often compare models by running experiments with neutral targeting parameters. They then measure whether the resulting audience skews demographically. Since direct demographic data is often unavailable, proxy methods are used, such as matching IP addresses to U.S. Census tract data. This helps reveal whether variables like location are acting as stand-ins for protected characteristics such as race or income.
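The proxy approach can be sketched as an impression-weighted average of tract-level demographics. This assumes the IP-to-tract lookup has already been done and that tract composition figures are available; the tract names, group labels, and numbers below are placeholders, not real Census data.

```python
def estimate_audience_composition(impressions_by_tract, tract_composition):
    """Estimate the demographic mix of an audience from tract-level shares.

    Each tract contributes its demographic shares in proportion to the
    impressions delivered there. This is an aggregate estimate and says
    nothing reliable about any individual user.
    """
    total = sum(impressions_by_tract.values())
    estimate = {}
    for tract, impressions in impressions_by_tract.items():
        weight = impressions / total
        for group, share in tract_composition[tract].items():
            estimate[group] = estimate.get(group, 0.0) + share * weight
    return estimate


# Placeholder inputs for illustration only.
impressions_by_tract = {"tract_1": 600, "tract_2": 400}
tract_composition = {
    "tract_1": {"group_x": 0.8, "group_y": 0.2},
    "tract_2": {"group_x": 0.3, "group_y": 0.7},
}

estimated_mix = estimate_audience_composition(impressions_by_tract, tract_composition)
print({group: round(share, 2) for group, share in estimated_mix.items()})
```

Because the estimate assumes users resemble their tract on average, it is best used to compare ads against each other rather than to report absolute audience shares.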

"Because 'fairness' and 'bias' are difficult to universally define, getting into the habit of having more than one set of eyes looking for algorithmic inequities in your systems increases the chances you catch rogue code before it ships." - Alex P. Miller and Kartik Hosanagar, The Wharton School

Formal AI audits are another critical step. These assessments, conducted by internal or external teams, evaluate automated decision-making systems both before and after deployment. A key focus is the delivery phase - the stage where platforms determine which users see which ads - rather than just the initial targeting settings. For example, in 2019, Facebook faced legal action alleging violations of the Fair Housing Act: its targeting systems allowed advertisers to exclude users based on protected characteristics like race, gender, and age. This legal action led to significant changes in how the platform handles housing, employment, and credit ads.

How to Reduce Bias in Adaptive Targeting

Once you've identified bias in your targeting systems, the next step is to take action. Addressing bias happens at three key stages: before training the model, during the learning process, and after making predictions. Each stage offers unique tools and challenges, giving you different ways to tackle the issue.

Better Dataset Design

Creating fair AI starts with using high-quality, unbiased data. One critical step is identifying proxy variables - attributes like ZIP codes, browsing history, or IP addresses that often align with protected characteristics such as race or income.
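One simple way to flag a proxy variable is to ask how much better you can guess a protected attribute once you know the feature. The sketch below is a minimal version of that check; it assumes you have an audit sample where the protected attribute is known, and the records, field names, and group labels are hypothetical.

```python
from collections import Counter, defaultdict


def proxy_strength(records, feature, sensitive):
    """Compare two guessing strategies for the sensitive attribute.

    baseline: always guess the most common group overall.
    with_feature: guess the most common group within each feature value.
    A large gap suggests the feature acts as a proxy.
    """
    overall = Counter(r[sensitive] for r in records)
    baseline = max(overall.values()) / len(records)

    by_value = defaultdict(Counter)
    for r in records:
        by_value[r[feature]][r[sensitive]] += 1
    correct = sum(max(counts.values()) for counts in by_value.values())
    return baseline, correct / len(records)


# Hypothetical audit sample: ZIP "A" is mostly group x, ZIP "B" mostly group y.
records = (
    [{"zip": "A", "group": "x"}] * 9
    + [{"zip": "A", "group": "y"}] * 1
    + [{"zip": "B", "group": "x"}] * 2
    + [{"zip": "B", "group": "y"}] * 8
)

baseline, with_feature = proxy_strength(records, "zip", "group")
print(f"baseline accuracy: {baseline:.2f}, using ZIP: {with_feature:.2f}")
```

Here knowing the ZIP code lifts guessing accuracy from 55% to 85%, which is the kind of gap that marks a feature for closer review.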

Auditing historical data is just as important. Algorithms trained on past customer behavior can unintentionally favor wealthier groups. For instance, higher-income individuals tend to shop more often and respond more strongly to discounts. Without adjustments, systems might end up offering better deals to affluent users, reinforcing existing economic inequalities. Additionally, platform-level factors can complicate things. For example, Facebook's internal "relevance" predictions might skew results, even when targeting parameters are designed to be inclusive.

Algorithm-Level Adjustments

Once you’ve ensured data quality, the next step is fine-tuning the algorithm itself to reduce bias. Building fairness directly into the learning process helps stop bias from taking hold. Constrained optimization techniques, for example, allow platforms to balance ad performance with fairness goals. These methods tweak "relevance" scores so that ads for things like housing or jobs aren’t disproportionately shown to - or hidden from - certain demographic groups.
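A toy version of constrained optimization is sketched below. Instead of adjusting relevance scores, it uses a simpler stand-in: maximize expected clicks subject to every group receiving at least a minimum share of impressions. The function name, predicted rates, and the 40% floor are all assumptions made for illustration.

```python
def allocate_with_floor(predicted_ctr, budget, min_share):
    """Split an impression budget to maximize expected clicks,
    subject to each group receiving at least `min_share` of the budget.

    With a linear objective, the optimum is to give every group its floor
    and send whatever is left to the group with the highest predicted CTR.
    """
    if min_share * len(predicted_ctr) > 1:
        raise ValueError("min_share is too large for the number of groups")
    allocation = {group: budget * min_share for group in predicted_ctr}
    remaining = budget - sum(allocation.values())
    best = max(predicted_ctr, key=predicted_ctr.get)
    allocation[best] += remaining
    return allocation


def expected_clicks(allocation, predicted_ctr):
    return sum(allocation[g] * predicted_ctr[g] for g in allocation)


# Hypothetical predicted click-through rates.
predicted_ctr = {"group_a": 0.012, "group_b": 0.009}

unconstrained = allocate_with_floor(predicted_ctr, budget=10_000, min_share=0.0)
constrained = allocate_with_floor(predicted_ctr, budget=10_000, min_share=0.4)

print(unconstrained, round(expected_clicks(unconstrained, predicted_ctr), 1))
print(constrained, round(expected_clicks(constrained, predicted_ctr), 1))
```

In this example the floor moves 4,000 impressions to the second group at a cost of about 10% of expected clicks, which makes the performance trade-off explicit rather than hidden.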

Another effective approach is conducting multi-stakeholder AI audits. These audits bring together internal teams and outside experts to identify blind spots that might otherwise go unnoticed. Regularly removing correlated attributes from customer profiles also helps, ensuring variables tied to ethnicity or socioeconomic status don’t influence results.

Post-Processing Corrections

Post-processing corrections are all about refining the outputs to address bias. For example, reranking can reorder results - like job ads or product recommendations - to ensure fair representation across groups, counteracting skews caused by user behavior patterns. Similarly, threshold adjustment modifies decision boundaries for different demographic groups, helping to balance outcomes when platform optimizations inadvertently create discrimination.
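Reranking can be sketched as a greedy pass over a score-sorted list that guarantees a minimum fraction of items from one group at every position in the ranking. This is one simple scheme among many, not the method of any specific platform; the item IDs, group labels, and scores are invented.

```python
import math


def rerank_with_minimum(items, protected_group, min_fraction):
    """Reorder (item_id, group, score) tuples by score while ensuring that,
    in every prefix of the result, at least floor(min_fraction * length)
    items belong to `protected_group` (as long as such items remain).
    """
    ranked = sorted(items, key=lambda item: item[2], reverse=True)
    protected = [item for item in ranked if item[1] == protected_group]
    others = [item for item in ranked if item[1] != protected_group]

    result, placed_protected = [], 0
    while protected or others:
        position = len(result) + 1
        required = math.floor(min_fraction * position)
        take_protected = bool(protected) and (
            not others
            or placed_protected < required
            or protected[0][2] >= others[0][2]
        )
        if take_protected:
            result.append(protected.pop(0))
            placed_protected += 1
        else:
            result.append(others.pop(0))
    return [item[0] for item in result]


# Hypothetical ranked ads: group "b" items score lower on predicted engagement.
ads = [
    ("ad1", "a", 0.9), ("ad2", "a", 0.8), ("ad3", "a", 0.7),
    ("ad4", "b", 0.6), ("ad5", "a", 0.5), ("ad6", "b", 0.4),
]

print(rerank_with_minimum(ads, protected_group="b", min_fraction=0.5))
```

Within each group the original score order is preserved, so the correction changes who appears near the top without discarding the relevance signal entirely.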

While these corrections might involve trade-offs, such as reduced financial efficiency, they’re critical for avoiding systemic discrimination in areas like housing and employment. After all, the cost of inaction in these domains is far greater than the compromises required to ensure fairness.

Governance and Ethical Oversight

Strong organizational commitment and well-defined governance structures are crucial to avoiding discriminatory outcomes in digital systems.

Transparency and Explainability

Transparent and explainable AI systems play a key role in ensuring accountability, especially when it comes to addressing data and algorithmic bias. Without clear explanations for AI-driven decisions - like why a particular ad was shown to one group and not another - it becomes nearly impossible to hold platforms accountable. By implementing mechanisms that clarify ad delivery decisions, organizations can turn opaque systems into more open and accountable processes.

The issue goes beyond the intentions of advertisers. It's essential for organizations to assess the internal ad delivery processes of platforms. These automated systems decide which users see specific ads, often leading to unintended biases, even when targeting parameters are inclusive. Research has shown significant disparities in ad delivery for sensitive areas such as employment and housing, highlighting the need for scrutiny. Tools like "Transparency-enhancing Ads" (Treads) are gaining attention for their ability to provide stakeholders with insights into these delivery mechanisms.

Platforms must also monitor whether "relevance" predictions and AI-driven interest-based optimization strategies unintentionally create demographic imbalances in critical areas like housing and employment. The increasing use of Large Language Models (LLMs) to identify hidden risks in web advertising reflects the industry's acknowledgment that traditional oversight methods often fall short. These transparency efforts not only clarify how AI makes decisions but also support ethical advertising practices, as discussed earlier.

Such measures pave the way for stronger regulatory frameworks, which are explored in the next section.

Regulations and Industry Standards

While technical solutions aim to address fairness at the algorithmic level, regulatory frameworks and industry standards are stepping in to enforce accountability on platforms. Legal actions have underscored this need. For instance, the 2019 Facebook Fair Housing Act case highlighted how ad delivery optimization could violate protections for certain groups. Similarly, a 2015 investigation revealed that the Princeton Review's dynamic pricing system disproportionately charged Asian families higher prices based on ZIP codes, showing how geographic data can unintentionally act as a proxy for race.

These examples are not isolated incidents. Research on ad delivery discrimination has been cited in nine policy sources and 49 policy documents as of early 2026. The study "Discrimination through optimization" alone has been downloaded over 11,500 times and cited 231 times. Such scrutiny is pushing the industry toward adopting formal AI audits - structured evaluations of algorithms for fairness, accuracy, and reliability.

"Given the social, technical, and legal complexities associated with algorithmic fairness, it will likely become routine to have a team of trained internal or outside experts try to find blind spots and vulnerabilities in any business processes that rely on automated decision making." - Alex P. Miller and Kartik Hosanagar, Harvard Business Review

Multi-stakeholder reviews are proving to be effective in identifying weaknesses in automated systems. These standards not only reinforce internal strategies for reducing bias but also help balance ethical considerations with performance goals. While addressing bias may seem expensive initially, it ultimately safeguards brand reputation and minimizes legal risks tied to discriminatory outcomes.

AdAmigo.ai: Ethical AI in Advertising

As regulations tighten and advertisers feel the pressure to balance performance with fairness, incorporating ethical safeguards into ad optimization has become crucial. This builds on earlier discussions about how biases can creep into adaptive targeting algorithms, impacting fairness. AdAmigo.ai steps into this space as an AI media buyer for Meta ads, delivering measurable results while prioritizing accountability. Its features reflect a commitment to integrating ethical oversight into adaptive targeting systems.

Bias Mitigation Features in AdAmigo.ai

AdAmigo.ai tackles bias with a multi-layered approach to oversight. One key feature is its continuous AI auditing system, designed to proactively identify and address skewed ad delivery. These audits provide real-time insights and corrective actions, ensuring campaigns stay on track.

The platform's "AI Actions" feature offers detailed explanations for every suggested optimization. According to G2-verified users, these insights clarify the reasoning behind each adjustment. Additionally, AdAmigo.ai monitors Meta's ad distribution to detect and address unintended demographic imbalances. This dual-monitoring system is designed to catch situations where platform-level optimizations might inadvertently cause bias, even when targeting settings seem neutral.

How AdAmigo.ai Balances Performance and Ethics

AdAmigo.ai doesn't just focus on fairness - it also delivers strong performance. It offers two operational modes to cater to different campaign needs. The Full Autopilot mode emphasizes scale and efficiency with hands-off, AI-driven management. On the other hand, the Semi-Autonomous mode allows for manual oversight with conditional budget automation rules, making it ideal for campaigns in sensitive areas where demographic balance is a key concern.

Users have reported significant results, including up to a 30% performance boost and ROAS increases of up to 83%, all while adhering to ethical guidelines. As Nadia Toffar put it, "What others promised, AdAmigo.ai delivered. True AI, automation, and results." The platform's ability to implement changes via chat commands, combined with detailed explanations for each decision, creates a transparent accountability trail. This ensures that both performance goals and ethical standards are upheld.

Conclusion: Balancing Performance and Ethics in AI Targeting

Adaptive targeting algorithms are powerful tools for advertisers, but they’re not without their challenges. While AI retargeting enables personalization at scale, it also raises complex ethical questions. Even with neutral targeting settings, bias can creep in. For instance, a 2019 study revealed that Facebook's internal optimization resulted in 95% of a secretary job ad being shown to women, while 75% of a janitor job ad was delivered to Black users.

Ignoring such biases doesn’t just harm consumer trust - it can also lead to significant legal and reputational risks. Consider Facebook’s 2019 case involving the Fair Housing Act or Princeton Review’s 2015 dynamic pricing controversy. These examples highlight that bias in adaptive targeting isn’t just a hypothetical issue; it has real-world consequences.

To address this, advertisers need to go beyond reviewing targeting inputs. They must also examine how ads are actually delivered. As Muhammad Ali and his research team pointed out:

"This underscores the need for policymakers and platforms to carefully consider the role of the ad delivery optimization run by ad platforms themselves - and not just the targeting choices of advertisers - in preventing discrimination in digital advertising."

Routine audits of ad delivery outcomes can help identify and correct discriminatory patterns early. Tools like AI audits and transparent scoring systems, such as those provided by AdAmigo.ai, offer practical ways to align business goals with ethical responsibilities.

The tension between achieving performance and ensuring fairness doesn’t have to be a zero-sum game. By adopting transparent relevance scoring, demanding detailed delivery reports across sensitive attributes, and holding platforms accountable, advertisers and policymakers can create a more equitable digital advertising space. As the authors of a Harvard Business Review article noted:

"Managers who anticipate these risks and act accordingly will be those who set their companies up for long-term success."

FAQs

How can “neutral” ad targeting still become discriminatory?

Neutral ad targeting might sound fair, but it can unintentionally lead to discrimination due to how algorithms optimize ad delivery. Even when settings are designed to be neutral, platforms often skew ad exposure toward certain demographics - like specific genders or racial groups. Why? Algorithms are programmed to prioritize factors like market trends, financial performance, or perceived relevance, which can result in biased outcomes without any deliberate intent.

What are the fastest ways to measure bias in ad delivery?

To quickly assess bias in ad delivery, you can:

Analyze demographic attributes: Examine how different demographic groups are targeted and served ads.

Use paired ads: Compare similar ads to spot disparities in delivery across various groups.

Adjust for inference errors: Account for inaccuracies in how user attributes are inferred.

Another effective approach is evaluating ad impression variance with fairness metrics, such as the Variance Reduction System (VRS) employed in Meta’s ad system. These strategies allow for efficient identification and mitigation of potential biases.
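For the paired-ads approach, a quick first check is whether the difference in delivery between two otherwise similar ads is larger than chance would explain. The sketch below uses a standard two-proportion z-test with invented counts. It treats impressions as independent, which is a simplifying assumption because the same user can see an ad more than once.

```python
import math


def two_proportion_z(hits_a, n_a, hits_b, n_b):
    """Two-sided two-proportion z-test.

    hits_*: impressions delivered to the group of interest for each ad.
    n_*: total impressions for each ad.
    Returns (z statistic, two-sided p-value).
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical paired ads with identical targeting:
# ad A reached women in 620 of 1,000 impressions, ad B in 480 of 1,000.
z, p_value = two_proportion_z(620, 1000, 480, 1000)
print(f"z = {z:.2f}, p = {p_value:.2g}")
```

A very small p-value says the two ads were delivered to measurably different audiences; whether that difference is a problem still depends on the ad category and context.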

How do you reduce bias without hurting ROAS?

Reducing bias in AI-driven ad targeting while keeping ROAS intact requires a thoughtful approach. One effective method is implementing fairness-aware systems that strike a balance between equitable exposure and business objectives. For instance, re-ranking ads to reduce demographic disparities can promote fairer delivery while still maintaining strong engagement levels. Regular auditing and applying fairness constraints are also crucial steps. These measures help address bias, ensuring ad delivery remains inclusive while safeguarding essential metrics like ROAS.