Why Baselines Matter in Creative Testing

Compare new ads to your account baselines — not industry averages — to spot winners faster, reduce wasted spend, and lift ROAS.

When testing new ad ideas, comparing them to your own past performance (baseline) is far more useful than relying on general industry averages (benchmarks). Baselines are specific to your audience, product, and brand, making them the most accurate measure of success. Here’s why they’re essential:

Better decisions: Without baselines, you risk wasting money on ads that seem promising but don’t deliver results.

Faster action: Baselines help you pause underperforming ads quickly, saving budget and time.

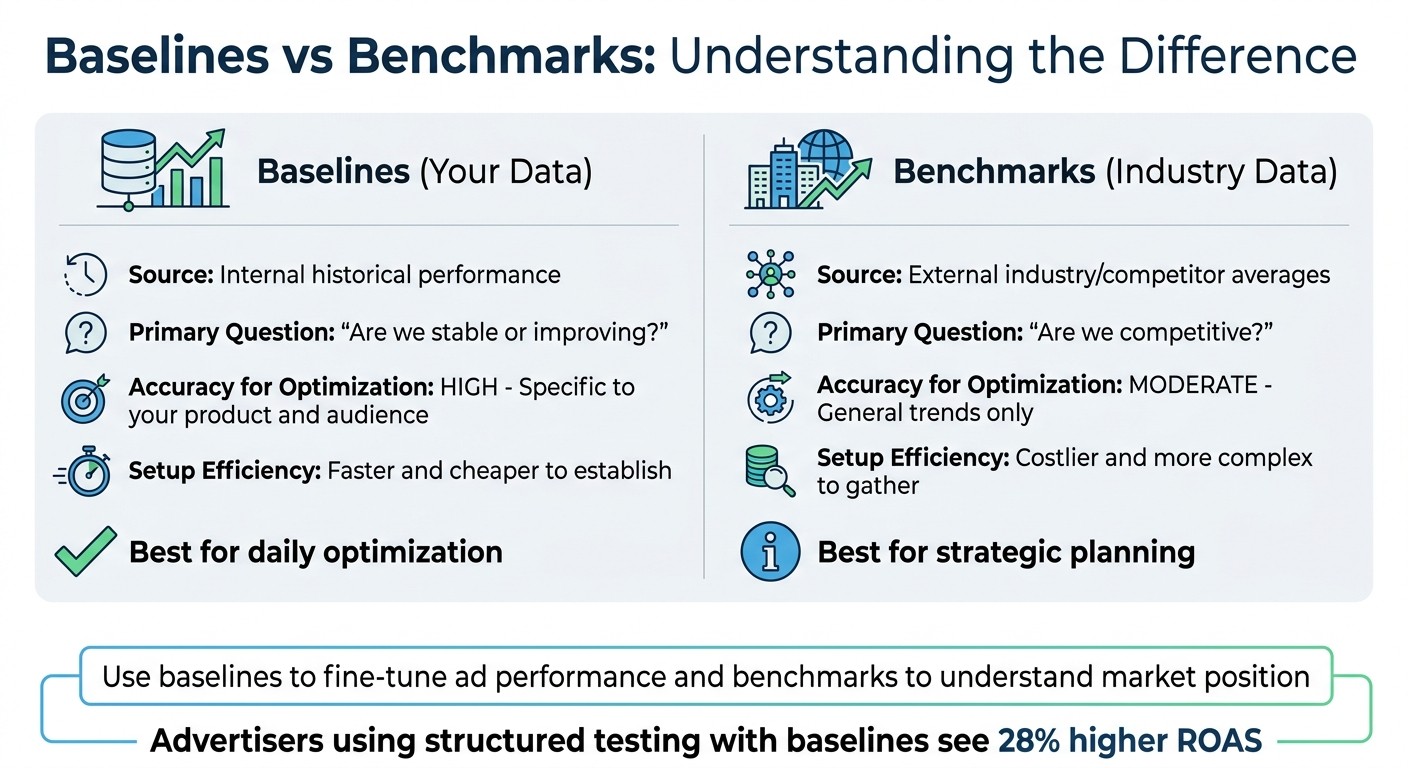

Higher returns: Advertisers using structured testing with baselines see 28% higher ROAS (Return on Ad Spend) than those who test without a plan.

Personalized insights: Unlike benchmarks, baselines reflect your actual performance, not general trends.

To set up baselines, track key metrics like CTR (Click-Through Rate), CPA (Cost Per Acquisition), and ROAS over at least 7 days. Use tools like AdAmigo.ai to automate baseline tracking and creative testing, ensuring decisions are always data-driven.

What Are Baselines and Why Do They Matter?

Baselines vs Benchmarks: Key Differences in Ad Performance Tracking

What Baselines Mean in Advertising

A baseline is essentially the historical performance data from your ad account. It serves as a reference point to measure how your current campaigns are performing. To establish a reliable baseline, you typically need to run a campaign for at least seven days, gathering consistent data on key metrics like CTR (Click-Through Rate), CPC (Cost Per Click), CPA (Cost Per Acquisition), ROAS (Return on Ad Spend), and frequency. This data becomes your baseline.
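As a rough illustration of the seven-day baseline described above, the sketch below pools daily totals into one baseline value per metric. The `DailyStats` structure and its field names are hypothetical stand-ins for whatever your reporting export provides. It pools totals (total spend divided by total clicks, and so on) rather than averaging daily ratios, so high-volume days carry proportionally more weight. Frequency uses unique reach for the whole period, passed in separately, because daily reach figures overlap and can't simply be summed.

```python
from dataclasses import dataclass


@dataclass
class DailyStats:
    """One day of ad-account totals (hypothetical field names)."""
    impressions: int
    clicks: int
    spend: float
    conversions: int
    revenue: float


def _ratio(numerator: float, denominator: float) -> float:
    # Return NaN instead of raising when a denominator is zero (e.g. no conversions yet).
    return numerator / denominator if denominator else float("nan")


def compute_baseline(days: list[DailyStats], period_reach: int, min_days: int = 7) -> dict[str, float]:
    """Pool at least `min_days` of daily totals into baseline CTR, CPC, CPA, ROAS and frequency.

    `period_reach` is unique reach for the whole period; daily reach can't be
    summed because the same person may be reached on several days.
    """
    if len(days) < min_days:
        raise ValueError(f"need at least {min_days} days of data, got {len(days)}")
    impressions = sum(d.impressions for d in days)
    clicks = sum(d.clicks for d in days)
    spend = sum(d.spend for d in days)
    conversions = sum(d.conversions for d in days)
    revenue = sum(d.revenue for d in days)
    return {
        "ctr": _ratio(clicks, impressions),
        "cpc": _ratio(spend, clicks),
        "cpa": _ratio(spend, conversions),
        "roas": _ratio(revenue, spend),
        "frequency": _ratio(impressions, period_reach),
    }


# Example: seven identical days of hypothetical data.
week = [DailyStats(impressions=10_000, clicks=150, spend=300.0, conversions=6, revenue=900.0) for _ in range(7)]
print(compute_baseline(week, period_reach=20_000))
# → {'ctr': 0.015, 'cpc': 2.0, 'cpa': 50.0, 'roas': 3.0, 'frequency': 3.5}
```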

In simple terms, a baseline helps answer: "How is our system performing right now?" It reflects the current performance of your ads within the context of your audience, product, and brand. When you introduce a new creative, its performance is measured against this baseline to determine whether it actually delivers better results.

For instance, if your baseline shows specific CTR and CPC levels, a new creative needs to outperform those baseline levels, typically by achieving about 25–35% higher CTR and 15–20% lower CPC, to be deemed successful. Without this baseline, it's easy to misinterpret small changes as significant improvements.
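The comparison can be made explicit with a small check. This is a minimal sketch that assumes you already have baseline CTR and CPC; the function name is hypothetical, and the defaults use the lower end of the 25–35% and 15–20% ranges quoted above, which you may want to tighten for your account.

```python
def compare_to_baseline(
    creative_ctr: float,
    creative_cpc: float,
    baseline_ctr: float,
    baseline_cpc: float,
    min_ctr_lift: float = 0.25,  # lower end of the 25-35% CTR range
    min_cpc_drop: float = 0.15,  # lower end of the 15-20% CPC range
) -> dict:
    """Relative CTR lift and CPC reduction versus baseline, plus a winner flag."""
    ctr_lift = creative_ctr / baseline_ctr - 1.0  # 0.30 means 30% higher CTR
    cpc_drop = 1.0 - creative_cpc / baseline_cpc  # 0.17 means 17% lower CPC
    return {
        "ctr_lift": ctr_lift,
        "cpc_drop": cpc_drop,
        # Both conditions must hold; a CTR jump alone is not enough.
        "winner": ctr_lift >= min_ctr_lift and cpc_drop >= min_cpc_drop,
    }


# Hypothetical baseline: 1.2% CTR at $2.00 CPC.
print(compare_to_baseline(0.0156, 1.66, baseline_ctr=0.012, baseline_cpc=2.00)["winner"])  # → True
print(compare_to_baseline(0.0132, 1.90, baseline_ctr=0.012, baseline_cpc=2.00)["winner"])  # → False
```

Requiring both conditions guards against creatives that attract cheap curiosity clicks without actually lowering costs.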

Once you’ve established your baseline, you can then compare it to external benchmarks to gain a broader perspective.

Baselines vs. Benchmarks: What's the Difference?

Once you understand baselines, the next step is distinguishing them from industry benchmarks. Benchmarks are external metrics, such as industry averages or competitor performance, while baselines are built entirely from your internal data. Benchmarks answer the question: "How do we compare to others in the market?" They're helpful for strategic planning but less effective for daily optimization, since they represent general trends rather than your unique conditions.

On the other hand, baselines are personalized, reflecting your historical performance under your specific circumstances. They’re quicker and less expensive to establish. As performance expert ngocninhhd puts it:

"Baselines ask, 'Are we stable?' Benchmarks ask, 'Are we competitive?' You need both answers. One without the other leaves blind spots."

Here’s a side-by-side comparison to clarify:

| Feature | Baselines (Your Data) | Benchmarks (Industry Data) |
|---|---|---|
| Source | Internal historical performance | External industry/competitor averages |
| Primary Question | "Are we stable or improving?" | "Are we competitive?" |
| Accuracy for Optimization | High (specific to your product and audience) | Moderate (general trends only) |
| Setup Efficiency | Faster and cheaper to establish | Costlier and more complex to gather |

The takeaway? Use baselines to fine-tune your ad performance and benchmarks to understand your market position. When testing new creatives, your baseline remains the most important reference point for gauging success.

How to Set Up Baselines for Creative Testing

Which Metrics to Use for Baselines

To establish a solid baseline, focus on key metrics like Return on Ad Spend (ROAS), Cost Per Acquisition (CPA), Click-Through Rate (CTR), and Cost Per Click (CPC). These provide a clear snapshot of your current performance, making it easier to evaluate new creative ideas effectively.

It's also helpful to track additional metrics, such as the thumb-stop ratio (3-second view rate), hold rate, video completion rate, and engagement rate. These supplementary data points can explain why a creative performs the way it does. For instance, if an ad has a strong CTR but struggles with CPA, these metrics might reveal that while users are clicking, they aren’t engaging further.
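To see where a creative diverges from your account's norm, one approach is to compute each supplementary ratio for both the creative and the baseline and compare them stage by stage. This sketch assumes thumb-stop ratio is 3-second video views divided by impressions and hold rate is ThruPlays divided by 3-second views; hold rate in particular has several definitions in practice, so substitute the one your team uses. All function names and numbers are hypothetical.

```python
def funnel_ratios(impressions: int, three_sec_views: int, thruplays: int, clicks: int) -> dict[str, float]:
    return {
        "thumb_stop": three_sec_views / impressions,  # share of impressions that stopped for 3 seconds
        "hold_rate": thruplays / three_sec_views,     # assumed definition: ThruPlays per 3-second view
        "ctr": clicks / impressions,
    }


def relative_to_baseline(creative: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    # 0.20 means 20% above baseline, -0.25 means 25% below.
    return {metric: creative[metric] / baseline[metric] - 1.0 for metric in baseline}


baseline = funnel_ratios(impressions=10_000, three_sec_views=2_500, thruplays=500, clicks=150)
creative = funnel_ratios(impressions=10_000, three_sec_views=3_000, thruplays=450, clicks=200)
for metric, delta in relative_to_baseline(creative, baseline).items():
    print(f"{metric}: {delta:+.0%}")
# thumb_stop: +20%
# hold_rate: -25%
# ctr: +33%
```

In this hypothetical example the hook stops more scrollers and earns more clicks, but fewer of the people who stopped keep watching, which points at the middle of the video rather than the opening.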

One tip: prioritize 1-day or 7-day click attribution over view-through attribution. View-through attribution can skew results by attributing conversions from other channels.

Once you've defined your metrics, dive into historical data to set baselines that reflect your actual performance.

How to Analyze Historical Data

Run a 7-day campaign with proven high-performing ads to establish consistent baselines for CTR, CPC, CPA, ROAS, and frequency.

When analyzing past performance, pay attention to your account's hit rate: the percentage of tested creatives that turn into winners. Most accounts see hit rates between 10% and 30%, which can help you set realistic goals.

For deeper insights, evaluate historical data at the element level instead of just looking at the ad as a whole. Tagging elements like hook format, call-to-action, and tone can help you identify patterns and pinpoint what drives success. This method ensures your future tests are more informed and targeted.
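Element-level analysis amounts to grouping past ads by a tag and comparing average performance per tag value. Here is a minimal sketch, assuming each ad has been tagged by hand with hypothetical `hook`, `cta`, and `tone` fields. It also returns the number of ads behind each average, since a tag seen on only one or two ads is weak evidence.

```python
from collections import defaultdict
from statistics import mean


def element_performance(ads: list[dict], element: str, metric: str = "ctr") -> dict[str, tuple[float, int]]:
    """Average `metric` for each value of a tagged creative `element`, with sample counts."""
    groups: dict[str, list[float]] = defaultdict(list)
    for ad in ads:
        groups[ad[element]].append(ad[metric])
    return {value: (mean(scores), len(scores)) for value, scores in groups.items()}


# Hypothetical tagged history; tag names and values are illustrative.
ads = [
    {"hook": "question", "cta": "shop_now", "tone": "emotional", "ctr": 0.021},
    {"hook": "question", "cta": "learn_more", "tone": "playful", "ctr": 0.019},
    {"hook": "statistic", "cta": "shop_now", "tone": "emotional", "ctr": 0.012},
    {"hook": "statistic", "cta": "learn_more", "tone": "factual", "ctr": 0.014},
]
for hook, (avg_ctr, n) in element_performance(ads, "hook").items():
    print(f"{hook}: {avg_ctr:.2%} CTR across {n} ads")
# question: 2.00% CTR across 2 ads
# statistic: 1.30% CTR across 2 ads
```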

Using AdAmigo.ai to Build Baselines

Once you’ve reviewed your historical metrics, automation tools can help streamline the process of maintaining accurate baselines.

AdAmigo.ai's AI Autopilot simplifies baseline management by continuously auditing your Meta ad account and tracking key performance metrics. It analyzes your historical data, identifies your current performance baselines, and updates them automatically as your campaigns evolve.

The platform also features an AI Chat Agent, which makes baseline analysis feel like a conversation. You can ask questions like, “What’s my current baseline CPA for cold traffic?” or “How does this week’s ROAS compare to last month’s baseline?” and get instant, data-driven answers. When testing new creatives, AdAmigo.ai flags ads that outperform your baselines by 25% to 35%, a common threshold for identifying winners.

Additionally, AdAmigo.ai monitors your account for performance shifts that could impact your baseline. For example, if your top-performing ads begin to fatigue (evidenced by frequency exceeding 4.0), the AI detects this and adjusts your baselines accordingly. This ensures that your comparisons are always based on the most accurate and up-to-date data, keeping your testing process reliable and effective.
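How AdAmigo.ai implements this internally isn't described here. As a do-it-yourself approximation only, the sketch below computes frequency as impressions divided by reach, flags ads above the 4.0 threshold mentioned above, and recomputes baseline CTR from the remaining ads. Excluding fatigued ads from the baseline is one assumed way to adjust it; the class and function names are hypothetical.

```python
from dataclasses import dataclass

FATIGUE_FREQUENCY = 4.0  # threshold quoted in the text


@dataclass
class AdStats:
    name: str
    impressions: int
    reach: int
    clicks: int

    @property
    def frequency(self) -> float:
        # Average number of times each reached person saw the ad.
        return self.impressions / self.reach


def split_by_fatigue(ads: list[AdStats], threshold: float = FATIGUE_FREQUENCY) -> tuple[list[AdStats], list[AdStats]]:
    fresh = [ad for ad in ads if ad.frequency <= threshold]
    fatigued = [ad for ad in ads if ad.frequency > threshold]
    return fresh, fatigued


def pooled_ctr(ads: list[AdStats]) -> float:
    return sum(ad.clicks for ad in ads) / sum(ad.impressions for ad in ads)


ads = [
    AdStats("A", impressions=50_000, reach=10_000, clicks=600),  # frequency 5.0
    AdStats("B", impressions=30_000, reach=10_000, clicks=450),  # frequency 3.0
    AdStats("C", impressions=20_000, reach=8_000, clicks=300),   # frequency 2.5
]
fresh, fatigued = split_by_fatigue(ads)
print([ad.name for ad in fatigued])                          # → ['A']
print(f"{pooled_ctr(ads):.2%} -> {pooled_ctr(fresh):.2%}")   # → 1.35% -> 1.50%
```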

Common Mistakes When Using Baselines

Using Old or Outdated Data

Using outdated baselines can lead to misleading results in your creative testing. If you compare new ads to performance data from months ago, the comparison may no longer be valid. Why? Because past performance often doesn’t align with current conditions.

Changes in privacy rules and platform algorithms can quickly render older baselines irrelevant. For example, Apple privacy updates like iOS 14.5 and SKAdNetwork (SKAN) 4.0, along with Meta's Andromeda algorithm, which now emphasizes creative diversity and quality, have significantly shifted performance metrics.

To stay accurate, it’s essential to refresh baselines regularly. This ensures they reflect evolving algorithms, audience behaviors, and even creative fatigue. Knowing how to spot ad fatigue is critical for maintaining baseline accuracy. Tools like AdAmigo.ai can help by automatically tracking performance shifts and updating your baselines as conditions change. This way, you can make comparisons that actually hold up.

Another common pitfall? Overlooking external factors, which we’ll cover next.

Ignoring External Factors

Even with updated data, baselines can lose their accuracy if external influences are ignored. Things like holidays, major news events, or competitor activities can skew results.

Take Black Friday testing, for instance. If you're testing during this high-traffic week, comparing results to a baseline CPA from a quiet February is bound to give you unreliable insights. Similarly, a sudden spike in CPM might not signal a failing creative; it could simply be a competitor flooding the auction or a platform-wide fluctuation. Devon Bennett, a Data Scientist & Analyst, explains this well:

"Before comparing Creative A vs. Creative B, I first verify that Groups A1 and A2 perform similarly. If they don't, something external is affecting the results, not the creative itself."

To minimize these issues, compare tests only against baseline data from the last 90 days, and keep a log of key events like holidays, product launches, or significant news cycles. If identical creatives perform differently, it's a strong signal that external factors are at play.
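One way to formalize the replication-group check is an A/A comparison: run the same creative in two groups and test whether their CTRs differ more than chance would explain. The quoted approach doesn't name a statistical test; the sketch below assumes a standard pooled two-proportion z-test on clicks and impressions, and the 0.05 significance level is a conventional default rather than a recommendation from the source. Function names are hypothetical.

```python
import math


def two_proportion_p_value(clicks_a: int, imps_a: int, clicks_b: int, imps_b: int) -> float:
    """Two-sided p-value for 'both groups share the same CTR' (pooled two-proportion z-test)."""
    p_a, p_b = clicks_a / imps_a, clicks_b / imps_b
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    if se == 0:
        return 1.0  # no clicks (or all clicks) in both groups: no evidence of a difference
    z = (p_a - p_b) / se
    # Two-sided tail probability of a standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


def replicas_agree(clicks_a: int, imps_a: int, clicks_b: int, imps_b: int, alpha: float = 0.05) -> bool:
    """True if identical replication groups show no significant CTR difference."""
    return two_proportion_p_value(clicks_a, imps_a, clicks_b, imps_b) >= alpha


print(replicas_agree(150, 10_000, 155, 10_000))  # → True: safe to compare creatives
print(replicas_agree(150, 10_000, 250, 10_000))  # → False: look for an external cause
```

A passing result means the groups show no detectable difference, not that they are proven identical; with small samples, real external effects can go undetected.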
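The 90-day window and an event log can be combined into a single baseline calculation. This sketch assumes you keep daily spend and conversion totals keyed by date; any day older than 90 days, or on your list of flagged event dates, is skipped before CPA is pooled. The function name, data layout, and figures are hypothetical.

```python
from datetime import date, timedelta


def windowed_cpa(
    daily: dict[date, tuple[float, int]],
    today: date,
    window_days: int = 90,
    excluded: frozenset[date] = frozenset(),
) -> float:
    """Pooled CPA (total spend / total conversions) over the last `window_days`, skipping flagged dates."""
    start = today - timedelta(days=window_days)
    spend = 0.0
    conversions = 0
    for day, (day_spend, day_conversions) in daily.items():
        if start <= day < today and day not in excluded:
            spend += day_spend
            conversions += day_conversions
    if conversions == 0:
        raise ValueError("no conversions in the baseline window")
    return spend / conversions


daily = {
    date(2024, 8, 1): (1_000.0, 10),    # older than 90 days: ignored
    date(2024, 11, 15): (500.0, 10),
    date(2024, 11, 29): (3_000.0, 20),  # Black Friday: logged as an event and excluded
    date(2024, 12, 1): (600.0, 12),
}
events = frozenset({date(2024, 11, 29)})
print(windowed_cpa(daily, today=date(2024, 12, 10), excluded=events))  # → 50.0
```

Without the exclusion, the same data gives a baseline CPA of about $97.62, nearly double, which is the kind of distortion the Black Friday example warns about.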

How Baselines Improve Creative Testing Decisions

Using Baselines to Test New Creatives

Baselines are a powerful tool for refining creative testing. They help you sift through new ideas, ensuring your budget isn't wasted on underperforming ads. By comparing new creatives to established metrics like Cost Per Acquisition (CPA) or Customer Acquisition Cost (CAC), you can quickly identify which ones are scalable. For example, on platforms like Meta, top-performing creatives often command 80–95% of the daily spend. On the flip side, if a creative captures less than 50% of its allocated budget within 48 hours, it's likely not meeting expectations.
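Both spend signals can be checked in a few lines. This sketch assumes you have each creative's spend since launch and a planned spend figure for the same period (here, a hypothetical even split of a $180 two-day ad set budget). How you define "allocated budget" depends on your campaign structure, so treat the function names and inputs as illustrative.

```python
def spend_shares(spend: dict[str, float]) -> dict[str, float]:
    """Each creative's share of total spend in the ad set."""
    total = sum(spend.values())
    return {name: amount / total for name, amount in spend.items()}


def under_delivering(
    spend: dict[str, float],
    planned: dict[str, float],
    hours_live: float,
    min_hours: float = 48,
    min_fraction: float = 0.5,
) -> list[str]:
    """Creatives that spent less than `min_fraction` of their planned budget after `min_hours`."""
    if hours_live < min_hours:
        return []  # too early to judge
    return [name for name, amount in spend.items() if amount < min_fraction * planned[name]]


spend_48h = {"A": 150.0, "B": 20.0, "C": 10.0}
planned_48h = {"A": 60.0, "B": 60.0, "C": 60.0}
print(f"{spend_shares(spend_48h)['A']:.0%}")                          # → 83%
print(under_delivering(spend_48h, planned_48h, hours_live=48))        # → ['B', 'C']
```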

To gather meaningful data, set your daily testing budget at 2–3 times your target CPA. If the creative converts near that target, this buys roughly 2–3 conversions per day, giving you enough conversion volume to judge results reliably. Marketing expert Angad Singh points out that most ad budgets on Meta aren't wasted on poor targeting; ineffective creative testing is what drains resources.
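The 2–3x rule is straightforward arithmetic, but it only produces 2–3 conversions per day if your actual CPA lands near the target. The hypothetical helpers below make that dependency visible.

```python
def daily_budget_range(target_cpa: float, low: float = 2.0, high: float = 3.0) -> tuple[float, float]:
    """Daily test budget of 2-3x the target CPA."""
    return target_cpa * low, target_cpa * high


def expected_daily_conversions(daily_budget: float, actual_cpa: float) -> float:
    # Conversions per day depend on the CPA the creative actually achieves, not the target.
    return daily_budget / actual_cpa


print(daily_budget_range(40.0))  # → (80.0, 120.0)
print([round(expected_daily_conversions(b, 60.0), 2) for b in daily_budget_range(40.0)])  # → [1.33, 2.0]
```

With a $40 target CPA the rule suggests $80–$120 per day. If the creative actually converts at $60, the same budget buys only about 1.3–2 conversions per day, so reaching a trustworthy read takes longer.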

A great case study comes from Data Scientist Devon Bennett, who implemented a baseline-based testing approach during a Q4 campaign. Using replication groups, his team identified a winning "emotional" creative that outperformed historical metrics by 31%, delivering significant cost savings. Bennett explained:

"If random chance gives you a 50% chance of picking the winning creative, any system that gets you to 70% accuracy is worth building."

This kind of data-driven strategy not only improves decision-making but also lays the groundwork for automated systems capable of running tests at scale.

How AdAmigo.ai Automates Creative Testing with Baselines

Automation takes the efficiency of baselines to the next level, making creative testing a non-stop, hands-free process. AdAmigo.ai's AI Autopilot uses baseline data to launch, refine, and manage creative tests without requiring constant oversight. Instead of relying on manual comparisons, the platform audits your account, identifies key opportunities, and aligns optimizations with your KPIs.

With its AI Creative Generation (Ad Factory), AdAmigo analyzes your top-performing ads and competitor trends to produce new creative variations. These are then tested against your baselines. If a creative underperforms, the system can either pause it automatically or wait for your approval, depending on your settings.

Unlike traditional testing, which might limit you to 3–5 creative variations due to time constraints, AdAmigo can launch and monitor hundreds of variations simultaneously. It tracks early spending patterns, reallocates budgets to high-performing creatives, and removes underperformers in real time.

This automation eliminates the need for manual data crunching and constant adjustments. Running 24/7, the system continuously evaluates creatives against your baselines, optimizing performance while you focus on broader strategy and creative direction. Let the AI handle the nitty-gritty while you steer the ship.

Conclusion

Baselines are the foundation that makes creative testing effective. Without them, you’re left with data that might look impressive but offers little direction for improving future campaigns. By comparing new creatives to your historical performance, you can determine if they’re genuinely better, something generic industry benchmarks just can’t tell you.

Advertisers who stick to a structured testing framework using baselines see 28% higher ROAS compared to those who test without a plan. Marketing expert Angad Singh puts it perfectly:

"The right angle with mediocre execution usually outperforms the wrong angle with excellent execution."

In Meta’s AI-driven advertising system, your account’s internal signals carry far more weight than any broad industry averages. With creative quality influencing 47% of ad performance variability, having reliable baseline data is crucial for helping algorithms optimize and scale the best-performing ads.

This is where AdAmigo.ai steps in. Instead of spending hours manually tweaking campaigns, its AI Autopilot benchmarks new creatives, quickly identifies underperformers, and scales up winning ads. It even includes an AI Creative Generation feature to create fresh variations based on your top performers, helping you avoid creative fatigue. This shift to AI simplifies the process, taking you from manual trial-and-error to data-driven creative optimization.

Ultimately, testing against proven performance metrics removes the guesswork and delivers measurable results. Baseline-driven testing isn't complicated; it just requires consistency and the right tools. Whether you're managing campaigns yourself or leveraging AI, the key remains the same: measure against what works for you, not against generic industry standards.

FAQs

How often should I refresh my baselines?

To keep your ads effective and avoid creative fatigue, it's a good idea to refresh your baselines approximately every 10 days. This approach aligns with practices from leading brands, which regularly update their creatives within this window to sustain engagement and optimize performance.

What’s the minimum spend needed to trust a creative test?

To assess the performance of a creative test on Meta, you'll generally need to allocate $50 to $100 per creative. This range provides enough data to make informed decisions. As a rule of thumb, pause creatives that aren't performing well within 48–72 hours or once they reach this spend level.
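This rule of thumb can be expressed as a small decision function. The sketch assumes "not performing well" means a CPA above your baseline CPA, and picks defaults of $100 and 72 hours from the upper ends of the quoted ranges; the function and parameter names are hypothetical, so adjust the thresholds to your account.

```python
def should_pause(
    spend: float,
    hours_live: float,
    conversions: int,
    baseline_cpa: float,
    spend_limit: float = 100.0,  # upper end of the $50-$100 range
    review_hours: float = 72.0,  # upper end of the 48-72 hour range
) -> bool:
    """Pause once the test has spent enough or run long enough and still trails the baseline CPA."""
    ready_to_judge = spend >= spend_limit or hours_live >= review_hours
    if not ready_to_judge:
        return False  # keep collecting data
    if conversions == 0:
        return True  # hit the spend or time limit with no conversions at all
    return spend / conversions > baseline_cpa


print(should_pause(40.0, 24, 0, baseline_cpa=40.0))   # → False (too early)
print(should_pause(100.0, 30, 0, baseline_cpa=40.0))  # → True  (spent the limit, no conversions)
print(should_pause(100.0, 30, 3, baseline_cpa=40.0))  # → False (CPA about $33, beats baseline)
print(should_pause(60.0, 72, 1, baseline_cpa=40.0))   # → True  (CPA $60, worse than baseline)
```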

How do I control for seasonality and other external factors?

To better understand how seasonality and external factors impact your campaigns, it's important to run structured creative tests. This approach helps identify genuine performance patterns. Tools like AdAmigo.ai can be a game-changer, offering real-time monitoring and adjustments. By leveraging automation, you can reduce the impact of unexpected fluctuations and keep your results steady.